April 7, 2026

How Trust Determines the Success of Enterprise AI Initiatives


In this article, you will discover:

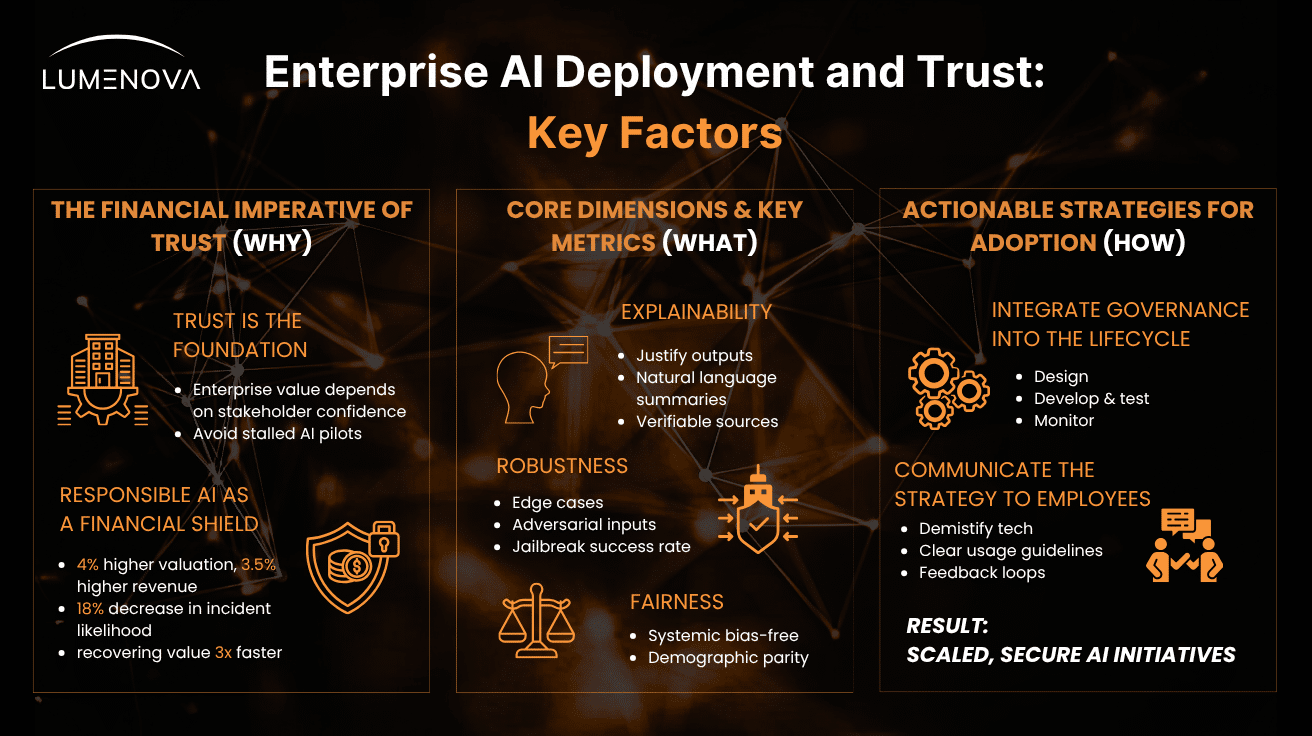

- The Financial Imperative of Trust: Why enterprise confidence is the fundamental currency for scaling AI, and how AI mishaps threaten market capitalization much like major cybersecurity breaches.

- The ROI of Responsible AI (RAI): How robust RAI programs act as a financial shield, driving higher valuations, preventing incidents, and enabling companies to recover market value and employee trust significantly faster after a crisis.

- How to Operationalize Trust: The core dimensions (Explainability, Robustness, Fairness) and specific product metrics (Hallucination Rates, Jailbreak Success) needed to quantify machine predictability and safety.

- Actionable Strategies for Building Trust: Practical steps for weaving AI governance directly into the machine learning lifecycle and effectively communicating safety guardrails to empower your human workforce.

Trust is the fundamental social glue of human existence. Interpersonally, it can be defined as the willingness to make yourself vulnerable to another person based on the positive expectation that they will not harm you and will likely support you. It is the mechanism that allows people to form relationships, build communities, cooperate in large groups, and navigate a world where we cannot possibly control everything.

While human trust is a complex blend of logic, emotion, and instinct, machine trust is about mathematical predictability and risk mitigation.

In the modern corporate landscape, the intersection of these two concepts has become a critical focal point for leadership. When we examine the relationship between AI deployment and trust, we are no longer just evaluating whether a model functions accurately; we are assessing whether stakeholders (employees, executives, customers, and regulators) feel secure relying on its outputs.

Establishing trust in enterprise AI systems requires a robust framework of transparency, governance, and ethical alignment. Without this foundational confidence, even the most technologically advanced and well-funded AI initiatives are destined to stall in the pilot phase, unable to scale or deliver their promised return on investment. And many enterprise AI pilots are, indeed, stalling. How can organizations address this growing problem? The answer lies in Responsible AI (RAI). But let’s first discuss the “why”.

The Enterprise Value of Responsible AI Deployment

Cyber Resilience as a Competitive Advantage

To understand the financial imperative of establishing trust in artificial intelligence, leaders must look at the historical precedent set by cybersecurity incidents. Over the past decade, we have repeatedly seen how catastrophic digital failures erode public confidence and directly reduce companies’ market value.

Consider the 2017 Equifax data breach. Hackers exploited a known, unpatched vulnerability to steal the personal information of 147 million consumers. The breach itself was damaging, but the ensuing erosion of public trust, fueled by a delay of more than a month before public disclosure and by reports of executives selling stock, was devastating. Equifax shares plummeted nearly 35% in the following weeks, erasing roughly $5 billion in market capitalization, and the company was ultimately hit with $700 million in fines and settlements.

More recent incidents tell a similar story of operational and reputational damage. The 2023 ransomware attack on MGM Resorts, initiated through social engineering, forced the shutdown of hotel, website, and ATM systems for 10 days. The massive disruption to guests and theft of personal data triggered sharp stock volatility and cost the company an estimated $100 million in lost revenue.

Even when a company’s stock valuation proves somewhat resilient, as was the case with UnitedHealth Group following the 2024 Change Healthcare ransomware attack, the fallout is severe. That attack crippled medical billing and pharmacy processing across the U.S., creating a critical healthcare situation and leading to projected financial losses of up to $2.9 billion for the year.

These cybersecurity crises highlight a fundamental business reality: cyber resilience is a massive competitive advantage precisely because it safeguards consumer trust. As organizations transition to the age of algorithmic decision-making, the relationship between AI deployment and trust must be viewed through the exact same lens.

Responsible AI: Trust as a Financial Shield and Engine for Recovery

According to extensive research and simulations conducted by PwC, trust is not just a soft metric; it is a profound business driver that accounts for a remarkable 31% of the variance in performance among companies. The data clearly shows that treating AI governance as a mere compliance exercise leaves money on the table. Companies that invest in a robust, comprehensive Responsible AI (RAI) program see valuations up to 4% higher and revenues up to 3.5% higher than their compliance-only peers.

Furthermore, proactive RAI investment acts as a powerful shield. A mere 3 percentage point increase in RAI spending decreases the likelihood of an AI incident by approximately 18%. This prevention is critical because the financial blow from a severe AI mishap can be devastating, potentially wiping out up to 50% of a company’s value within the first two weeks. To put that liability into perspective, major cybersecurity incidents over the past year typically knocked company stock values down by 15% to 18% immediately post-incident.

However, the true enterprise value of a Responsible AI framework is most evident in how an organization bounces back from adversity. Shortly after an AI incident, the trajectories of RAI-invested companies and compliance-only companies sharply diverge. A PwC simulation reveals that organizations with comprehensive RAI programs recover 90% of their pre-incident value in just seven weeks, and 95% within 13 months. Conversely, organizations lacking substantial RAI infrastructure take more than three times longer (25 weeks) just to hit the 90% recovery mark, and they fail to ever reach 95% within the modeled period.

Interestingly, the engine driving this rapid recovery is internal. While public trust takes time to mend across the board, employee trust within an RAI-adopting company recovers twice as fast following an incident. Because their workers feel secure in the organization’s ethical guardrails, their use of AI tools returns to pre-incident levels 30% faster. These companies also find it notably easier to retain and attract top-tier AI talent after a mishap. Ultimately, organizations with solid RAI foundations don’t just recover; they evolve, eventually seeing their employee trust and personnel quality exceed pre-incident levels by roughly 5%.

How Can Trust in Enterprise AI Be Operationalized?

Building trust in enterprise AI systems is one of the most complex challenges organizations face today. As LLMs and humans both agree, trust isn’t a single mathematical absolute or a simple dashboard KPI. Rather, it is a byproduct of a system demonstrating consistent, reliable, and fair behavior under uncertainty.

To move trust from an abstract principle to a tangible reality, it must be operationalized into workflows and quantified through a mix of technical, product, and risk-based metrics. Here is how leading enterprise frameworks and research institutions approach this challenge.

5 Core Dimensions of Trust for Enterprise AI Deployment

To systematically build trust, organizations typically break it down into a framework of measurable dimensions. Trust in AI deployments is generally operationalized across five pillars:

1. Explainability

Can the system justify its outputs to non-technical stakeholders? This involves providing natural-language summaries or highlighting the specific data points that most influenced the decision.

2. Robustness

Does the model perform reliably when exposed to edge cases, degraded data, or adversarial inputs?

3. Fairness

Are the outputs consistently free from systemic bias against specific groups or demographics?

4. Security & Integrity

Are the prompts, training data, and model weights protected from tampering, data leakage, and unauthorized access?

5. Accountability & Governance

Is there a clear human owner for the model? Is there a traceable audit trail and an established escalation path when anomalies occur?
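The accountability questions above can be made concrete as a record kept alongside each deployed model. The following is a minimal sketch, not a prescribed schema: the class names `AuditEvent` and `ModelGovernanceRecord`, their fields, and the sample values are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    """One immutable entry in a model's audit trail (hypothetical schema)."""
    timestamp: datetime
    actor: str
    action: str


@dataclass
class ModelGovernanceRecord:
    """Answers: who owns this model, what happened to it, who gets called."""
    model_name: str
    owner: str                  # the accountable human
    escalation_path: list[str]  # contacts in the order they are escalated to
    audit_trail: list[AuditEvent] = field(default_factory=list)

    def log(self, actor: str, action: str) -> AuditEvent:
        """Append a timestamped event to the audit trail and return it."""
        event = AuditEvent(datetime.now(timezone.utc), actor, action)
        self.audit_trail.append(event)
        return event

    def next_escalation(self, level: int) -> str:
        """Contact for a 0-based escalation level; the last contact if beyond."""
        if not self.escalation_path:
            raise ValueError("no escalation path defined")
        return self.escalation_path[min(level, len(self.escalation_path) - 1)]


# Example usage with made-up names.
record = ModelGovernanceRecord(
    model_name="claims-triage-v2",
    owner="jane.doe",
    escalation_path=["ml-oncall", "head-of-risk"],
)
record.log("jane.doe", "approved deployment")
```

A model with no owner or an empty escalation path is then easy to detect automatically, which is the point of writing the answers down in a structured form.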

Key Metrics for Measuring Trust in AI Systems

While human trust is subjective, the trustworthiness of an AI system can be measured. Relying solely on standard accuracy metrics (like F1-scores or Precision/Recall) is insufficient for enterprise trust. You must measure how the model behaves in its operational context.

Product and Safety Metrics

To translate the abstract concept of trust into measurable operational standards, organizations must rigorously track a core set of product and safety metrics:

- Hallucination & Factuality Rates: Evaluated against baseline “golden datasets” to measure how often the AI confidently invents false information.

- Bias & Fairness Metrics: Tracking demographic parity (similar rates of favorable outcomes across groups) or equalized odds (similar error rates across groups) to ensure the model does not disproportionately disadvantage specific user subgroups.

- Adversarial / Jailbreak Success Rate: The percentage of adversarial attempts in which users who intentionally provoke the system succeed in bypassing its safety guardrails.

- Explainability Coverage: The frequency with which the AI successfully provides valid citations, verifiable sources, or logical anchors for its recommendations.
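Each of the four metrics above reduces to a simple rate once evaluation results are labeled. The sketch below assumes a hypothetical `EvalRecord` schema in which every response has already been judged (by human reviewers or an automated grader) against a golden dataset; the field names and sample data are illustrative, and the fairness measure shown is a demographic parity gap rather than equalized odds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalRecord:
    """One labeled model response (hypothetical schema for illustration)."""
    is_factual: bool          # response agreed with the golden-dataset answer
    is_adversarial: bool      # prompt was a deliberate jailbreak attempt
    guardrail_bypassed: bool  # unsafe output was produced despite guardrails
    has_valid_citation: bool  # response carried a verifiable source
    group: str                # demographic subgroup associated with the request
    positive_outcome: bool    # model returned the favorable decision


def rate(flags: list[bool]) -> float:
    """Fraction of True values; 0.0 for an empty list."""
    return sum(flags) / len(flags) if flags else 0.0


def hallucination_rate(records: list[EvalRecord]) -> float:
    return rate([not r.is_factual for r in records])


def jailbreak_success_rate(records: list[EvalRecord]) -> float:
    # Only adversarial prompts count toward the denominator.
    attacks = [r for r in records if r.is_adversarial]
    return rate([r.guardrail_bypassed for r in attacks])


def explainability_coverage(records: list[EvalRecord]) -> float:
    return rate([r.has_valid_citation for r in records])


def demographic_parity_gap(records: list[EvalRecord]) -> float:
    """Largest difference in favorable-outcome rate between any two groups."""
    by_group: dict[str, list[bool]] = {}
    for r in records:
        by_group.setdefault(r.group, []).append(r.positive_outcome)
    rates = [rate(outcomes) for outcomes in by_group.values()]
    return max(rates) - min(rates) if rates else 0.0


# Example usage with made-up evaluation results.
sample = [
    EvalRecord(True, False, False, True, "A", True),
    EvalRecord(False, False, False, False, "A", True),
    EvalRecord(True, True, True, True, "B", False),
    EvalRecord(True, True, False, True, "B", True),
]
print(hallucination_rate(sample), jailbreak_success_rate(sample))
print(explainability_coverage(sample), demographic_parity_gap(sample))
```

The hard part in practice is producing the labels, not the arithmetic. The value of computing the rates this way is that the same definitions can be applied to every model and every release.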

Risk-Based Quantification

Researchers at the Lamarr Institute for Machine Learning and Artificial Intelligence have adapted traditional engineering risk frameworks, like Failure Mode and Effects Analysis (FMEA), to AI. By assessing the Occurrence, Significance, and Detection probability of system failures across the core pillars of Fairness, Robustness, Integrity, Explainability, and Safety (the FRIES framework), organizations can calculate a quantifiable risk score. This allows teams to prioritize which vulnerabilities pose the greatest threat to user confidence.
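To illustrate the idea, the sketch below scores failure modes in the style of classic FMEA, where a risk priority number is the product of three ratings. Several things here are assumptions rather than details taken from the Lamarr work: the 1 to 10 rating scales, the convention that a higher detection rating means a failure is harder to detect, the multiplicative aggregation, and the `FailureMode` class itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureMode:
    """A potential failure, rated FMEA-style (scales are assumed, not sourced)."""
    pillar: str        # e.g. Fairness, Robustness, Integrity, Explainability, Safety
    description: str
    occurrence: int    # 1 (rare) to 10 (frequent)
    significance: int  # 1 (negligible impact) to 10 (critical impact)
    detection: int     # 1 (almost always caught) to 10 (almost never caught)

    def __post_init__(self) -> None:
        for name in ("occurrence", "significance", "detection"):
            value = getattr(self, name)
            if not 1 <= value <= 10:
                raise ValueError(f"{name} must be in 1..10, got {value}")

    @property
    def risk_priority(self) -> int:
        """Classic FMEA risk priority number: product of the three ratings."""
        return self.occurrence * self.significance * self.detection


def prioritize(modes: list[FailureMode]) -> list[FailureMode]:
    """Return failure modes ordered from highest to lowest risk priority."""
    return sorted(modes, key=lambda m: m.risk_priority, reverse=True)


# Example usage with made-up ratings.
modes = [
    FailureMode("Fairness", "Higher error rate for one subgroup", 3, 8, 4),
    FailureMode("Robustness", "Accuracy drops on noisy input", 6, 5, 2),
    FailureMode("Safety", "Unsafe advice on an edge-case prompt", 2, 10, 7),
]
for mode in prioritize(modes):
    print(mode.pillar, mode.risk_priority)
```

Note how the rare but hard-to-detect safety failure outranks the more frequent robustness issue. Surfacing that kind of ordering is what a risk score is for.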

User-Centric Metrics

Ultimately, trust is revealed through human behavior. Organizations quantify this by tracking:

- Reliance Rates: How often do users accept the AI’s recommendation versus manually overriding it?

- UX Trust Signals: Qualitative user experience (UX) studies and surveys that gauge end-user confidence in the system’s outputs over time.
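Reliance rates are straightforward to compute from interaction logs. The following is a minimal sketch assuming a hypothetical log format in which each event is a pair of a week label and a flag for whether the user accepted the AI’s recommendation; the function name and sample data are illustrative.

```python
from collections import defaultdict


def reliance_rate_by_week(events: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of AI recommendations accepted (not overridden) in each week.

    `events` holds (week_label, accepted) pairs, e.g. ("2026-W01", True).
    """
    totals: dict[str, list[int]] = defaultdict(lambda: [0, 0])  # [accepted, seen]
    for week, accepted in events:
        totals[week][0] += int(accepted)
        totals[week][1] += 1
    return {week: acc / seen for week, (acc, seen) in sorted(totals.items())}


# Example usage with made-up interaction logs.
log = [
    ("2026-W01", True), ("2026-W01", False),
    ("2026-W02", True), ("2026-W02", True),
    ("2026-W02", True), ("2026-W02", False),
]
print(reliance_rate_by_week(log))
```

Tracking the rate per period rather than as a single number makes it possible to see whether confidence is growing, flat, or falling after a release or an incident.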

How to Build Trust in Enterprise AI Systems

Understanding the financial and operational value of trust is only the first step; the true challenge lies in operationalizing it. Building lasting confidence in algorithmic systems requires a shift away from reactive compliance toward proactive, continuous risk management. To bridge the gap between ambitious AI deployment and trust, organizations must focus on two fundamental pillars: integrating governance directly into the AI lifecycle and transparently communicating these guardrails to their teams.

Integrating AI Governance into the ML Lifecycle

Trust cannot be retrofitted into an AI model just before it goes live. If governance is treated as a final checkbox, it will inevitably act as a bottleneck, delaying time-to-market and frustrating engineering teams. Instead, Responsible AI (RAI) principles must be woven into the fabric of the entire machine learning lifecycle.

- Design and Ideation: Before a single line of code is written, teams must define the intended use case, assess potential risks, and determine the ethical boundaries of the application. This involves establishing clear baseline metrics for fairness, safety, and factuality.

- Development and Testing: During the training phase, models must be rigorously tested against the product and safety metrics mentioned earlier, such as hallucination rates and adversarial jailbreaks. Red-teaming (intentionally trying to break or bias the model) should be a standard practice to expose vulnerabilities before deployment.

- Continuous Monitoring: Machine learning models are not static software; they degrade and drift as they interact with real-world data. Continuous, automated monitoring is essential to ensure that the AI remains aligned with its original safety parameters over time. If a model begins to exhibit biased behavior or factuality issues, governance protocols should automatically trigger alerts or fallback mechanisms.
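The continuous monitoring step can be sketched as a rolling check that maps a recent failure rate to an action. This is an illustrative design, not a reference implementation: the `RollingMonitor` class, the window size, and both thresholds are assumptions, and "failed" stands for whatever check is being tracked (a factuality check, a bias check, and so on).

```python
from collections import deque
from enum import Enum


class Action(Enum):
    OK = "ok"
    ALERT = "alert"        # notify the model owner
    FALLBACK = "fallback"  # route traffic to a safer path, e.g. human review


class RollingMonitor:
    """Tracks the failure rate of one check over the last `window` responses."""

    def __init__(self, window: int, alert_at: float, fallback_at: float):
        if window < 1:
            raise ValueError("window must be at least 1")
        if not 0 <= alert_at <= fallback_at <= 1:
            raise ValueError("expected 0 <= alert_at <= fallback_at <= 1")
        self.outcomes: deque[bool] = deque(maxlen=window)
        self.alert_at = alert_at
        self.fallback_at = fallback_at

    def record(self, failed: bool) -> Action:
        """Record one outcome and return the action the current rate calls for."""
        self.outcomes.append(failed)
        # Wait for a full window so a single early failure cannot trip the gate.
        if len(self.outcomes) < self.outcomes.maxlen:
            return Action.OK
        failure_rate = sum(self.outcomes) / len(self.outcomes)
        if failure_rate >= self.fallback_at:
            return Action.FALLBACK
        if failure_rate >= self.alert_at:
            return Action.ALERT
        return Action.OK


# Example usage: feed in the pass/fail result of each production check.
monitor = RollingMonitor(window=4, alert_at=0.25, fallback_at=0.5)
for failed in [False, False, False, True, True]:
    action = monitor.record(failed)
print(action)
```

Separating the alert threshold from the fallback threshold lets a team investigate early drift without interrupting users, while still guaranteeing an automatic response if the problem worsens.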

By embedding governance directly into the CI/CD (Continuous Integration/Continuous Deployment) pipeline, organizations transform risk management from a bureaucratic hurdle into an engineering standard.
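One way to picture governance as a pipeline step is a release gate that compares an evaluation report against agreed limits and fails the build when any limit is exceeded. The sketch below is a simplified illustration: the metric names, the threshold values in `THRESHOLDS`, and the shape of the report dictionary are all hypothetical.

```python
import sys

# Hypothetical release limits; real values would come from the design phase.
THRESHOLDS = {
    "hallucination_rate": 0.05,
    "jailbreak_success_rate": 0.01,
    "demographic_parity_gap": 0.10,
}


def find_violations(metrics: dict[str, float],
                    thresholds: dict[str, float] = THRESHOLDS) -> list[str]:
    """Return one message per metric that is missing or above its limit."""
    violations = []
    for name, limit in thresholds.items():
        value = metrics.get(name)
        if value is None:
            # A metric that was never measured is treated as a failure.
            violations.append(f"{name}: missing from evaluation report")
        elif value > limit:
            violations.append(f"{name}: {value:.3f} exceeds limit {limit:.3f}")
    return violations


def main(metrics: dict[str, float]) -> int:
    """Print violations to stderr and return a process exit code (0 = pass)."""
    violations = find_violations(metrics)
    for message in violations:
        print(f"GOVERNANCE GATE FAILED: {message}", file=sys.stderr)
    return 1 if violations else 0
```

In a pipeline, a step would load the evaluation report produced earlier in the build and call `sys.exit(main(report))`, so that a non-zero exit code blocks the deployment in the same way a failing unit test would. Treating a missing metric as a violation is a deliberate choice here: it prevents a model from shipping simply because an evaluation was skipped.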

Communicating the AI Strategy and Guardrails to the Team

Even the most technologically secure AI system will fail if the human workforce refuses to use it. As the data shows, internal trust is the engine of AI adoption and post-incident recovery. Overcoming the “black box” anxiety requires clear, consistent, and honest communication from leadership.

- Demystify the Technology: Leadership must articulate exactly what the AI system is designed to do, how it makes decisions, and (crucially) what its limitations are. Employees need to understand that AI is a tool to augment their capabilities, not an infallible replacement for human judgment.

- Establish Clear Usage Guidelines: Ambiguity breeds mistrust. Provide comprehensive, easy-to-understand documentation on how employees should interact with the AI. This includes clear instructions on what types of data are safe to input, how to verify AI-generated outputs, and the protocols for reporting erratic model behavior.

- Create Feedback Loops: Trust is a two-way street. Create open, frictionless channels for employees to report algorithmic bias, interface issues, or workflow friction. When end-users feel that their oversight is valued and actively shapes the system’s guardrails, they transition from skeptical bystanders to invested stakeholders.

Conclusion: Securing the Future of Your AI Initiatives

Trust is no longer a soft, intangible metric; it is the foundational currency of the AI era. As we have seen, the relationship between AI deployment and trust dictates whether an initiative scales to deliver massive enterprise value or collapses under the weight of security incidents, reputational damage, and internal resistance. Treat AI governance as a strategic shield and a growth engine, rather than a mere compliance exercise, and your organization will be equipped to innovate fearlessly.

However, operationalizing this level of trust is complex. Tracking hallucination rates, ensuring demographic parity, and maintaining continuous governance across multiple models requires specialized infrastructure.

This is where Lumenova AI bridges the gap. The Lumenova AI Responsible AI Platform empowers enterprises to automate, measure, and scale trust across their entire AI lifecycle. By providing centralized governance frameworks, automated risk assessments, and real-time monitoring of critical safety metrics, Lumenova AI ensures that your models remain ethical, compliant, and deeply trusted by all stakeholders, from your engineers to your executive board.

Don’t let a lack of trust stall your AI transformation.

Ready to turn Responsible AI into your greatest competitive advantage? Book a discovery call today to see how the Lumenova AI platform can secure and accelerate your enterprise AI initiatives.
