March 10, 2026

How AI Observability Improves Model Performance Tracking (and Detects Model Drift Early)


AI observability (the practice of continuously monitoring and explaining model behavior in production) improves model performance tracking by replacing periodic audits with real-time telemetry. It collects AI-specific data points, including token usage, model drift signals, and response quality metrics, and organizes them into actionable visibility.

That is the short answer. The reason it matters so much right now goes a little deeper, and it takes us back to W. Edwards Deming, the quality expert who helped rebuild Japanese industry after the war. A phrase widely attributed to him should be etched on every MLOps engineer’s forearm: “In God we trust. All others must bring data.”

Deming was not diagnosing AI; he was diagnosing human beings. He recognized that people are prone to misperceive, confidently mistaking what looks true for what is true. The dashboard shows healthy latencies and the demos won approval, so the model must be working. In reality, we are only guessing about the model, and the model itself is also guessing.

Fast-forward to March 2026: AI now forms a critical backbone of enterprise operations such as logistics, underwriting, triage, and hiring. Human judgment has not improved in the meantime. We can build impressive systems and models whose outputs “look” good but do not accurately reflect reality. Deming’s maxim is needed more than ever, yet it continues to be ignored.

How AI Observability Detects Model Drift Early

Model drift is sneaky. It slips in without warning, and before you know it, your model is making choices based on outdated information.

AI observability detects model drift early by continuously monitoring model inputs, outputs, and performance signals in real time, allowing teams to identify changes in data patterns or model behavior before they significantly impact results.

Instead of relying on periodic evaluations or static dashboards, observability systems track live telemetry from AI models in production. This includes metrics such as prediction distributions, feature shifts, output confidence scores, and user interaction patterns. When these signals start deviating from the model’s baseline behavior, the system flags potential drift.
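One common way to put a number on "deviating from the baseline" is the Population Stability Index (PSI), which compares a binned baseline sample with a binned live sample. The sketch below is a minimal illustration, not a description of how any particular platform detects drift. The function name `population_stability_index`, the ten equal-width bins, and the `eps` floor for empty bins are assumptions made for the example.

```python
import math
import random


def population_stability_index(baseline, live, bins=10, eps=1e-6):
    """Compare two samples of a numeric signal (e.g. confidence scores).

    Bin edges are equal-width over the baseline's range; live values
    outside that range are clamped into the first or last bin.
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    p, q = proportions(baseline), proportions(live)
    # Each term is non-negative, so PSI is 0 only when the binned shapes match
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


if __name__ == "__main__":
    rng = random.Random(0)
    baseline = [rng.gauss(0.0, 1.0) for _ in range(5000)]
    same = [rng.gauss(0.0, 1.0) for _ in range(5000)]
    shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]
    print("no drift:", population_stability_index(baseline, same))
    print("drift:   ", population_stability_index(baseline, shifted))
```

A frequently quoted rule of thumb treats a PSI above roughly 0.2 as a shift worth investigating, but the right threshold depends on the signal and should be tuned per model.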

Modern AI observability platforms, including Lumenova AI, provide this continuous visibility so teams can detect drift early and respond before performance degrades.

5 Top Features to Look For in an AI Observability Platform

To effectively monitor models in production, organizations should look for platforms that offer a combination of continuous monitoring, evaluation capabilities, governance support, and safeguards for modern AI systems.

The following features represent the core capabilities of a robust AI observability platform.

1. Continuous Monitoring & Observability

To spot drift, you need to see how your models behave at all times. You want to know the second something changes, not when a customer complains or during an audit.
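As a rough sketch of what "knowing the second something changes" can look like in code, the class below keeps a sliding window over a streamed metric and fires as soon as the rolling mean leaves a tolerance band around a baseline. `RollingMonitor`, the window size, and the tolerance are hypothetical choices for illustration only.

```python
from collections import deque


class RollingMonitor:
    """Flags when the rolling mean of a metric moves away from a baseline."""

    def __init__(self, baseline_mean, tolerance, window=50):
        self.baseline_mean = baseline_mean
        self.tolerance = tolerance
        self.values = deque(maxlen=window)  # oldest values drop off automatically

    def observe(self, value):
        """Record one observation; return True if an alert should fire."""
        self.values.append(value)
        if len(self.values) < self.values.maxlen:
            return False  # wait until the window is full before judging
        rolling_mean = sum(self.values) / len(self.values)
        return abs(rolling_mean - self.baseline_mean) > self.tolerance


# Invented stream of confidence scores that degrades near the end
monitor = RollingMonitor(baseline_mean=0.90, tolerance=0.05, window=5)
stream = [0.91, 0.89, 0.90, 0.92, 0.90, 0.70, 0.65, 0.60]
for position, confidence in enumerate(stream):
    if monitor.observe(confidence):
        print(f"alert at observation {position}")
        break
```

A small window reacts quickly but is noisier; a larger one is steadier but slower to alert. That trade-off is the main design decision in a monitor like this.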

2. Evaluation Engine

Checking now and then isn’t going to cut it. A solid evaluation engine assesses many dimensions, such as fairness, performance, security, and data quality, so problems get noticed before they cause trouble.

3. AI Inventory

You can’t watch what you don’t know about. An inventory of all your AI systems shows everyone what’s deployed, what each system does, and where things might be going wrong.

4. Automated Risk & Compliance Workflows

Drift isn’t just a technical problem. It can create regulatory and reputational issues. Automated checks mapped to regulations such as the EU AI Act help you stay compliant as your models change.

5. Guardrails for Generative AI

With GenAI, drift might show up as responses that are incoherent or even harmful. Guardrails keep outputs within acceptable bounds, which lets teams deploy with confidence.

AI Observability for Agentic AI: Monitoring Reasoning Loops in 2026

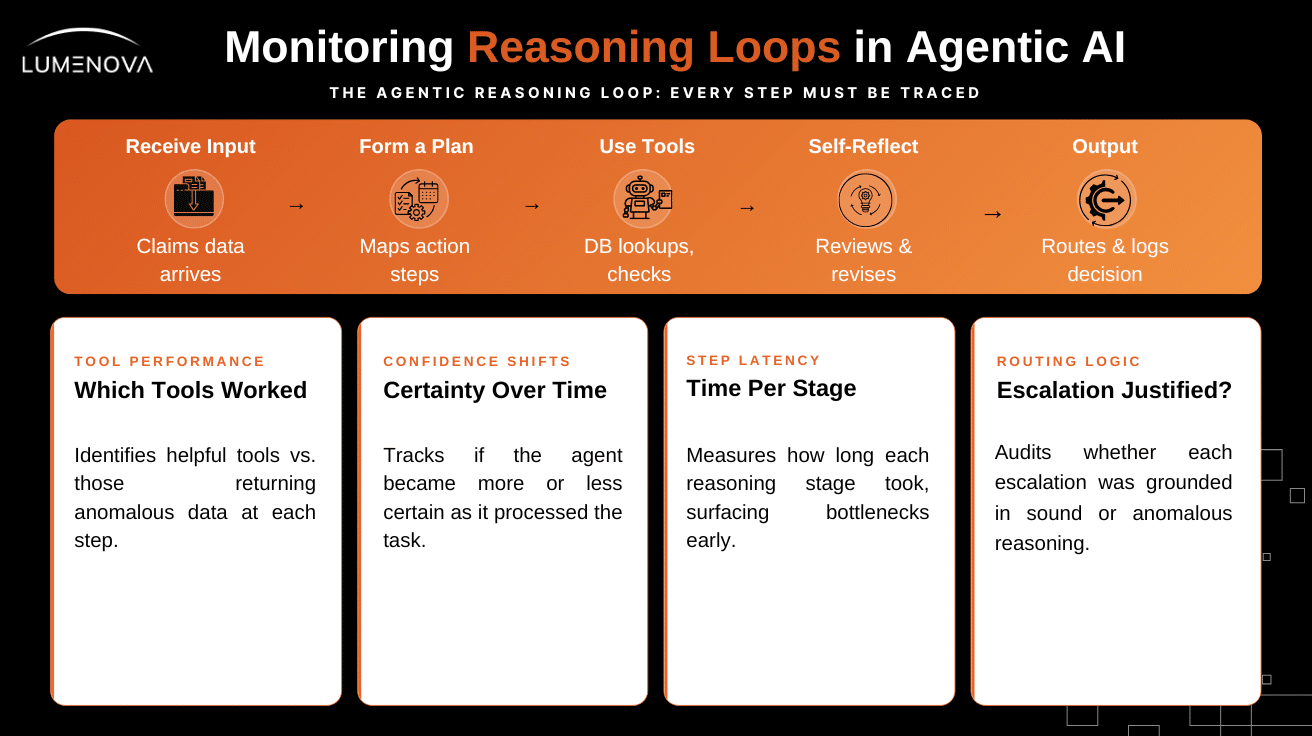

The rise of agentic AI has totally switched up the game for AI observability. Back in 2024, watching a model meant just checking what went in and what came out. Now, in 2026, we’re watching a system that takes something in, figures out a plan, uses different tools, thinks about what it’s doing, changes its plan if needed, and finally spits out an answer, often after a bunch of tries.

Each of those tries could mess things up. But old-school monitoring doesn’t even see them.

How to Monitor Reasoning Loops in Autonomous AI Agents

To watch agents properly, we need to trace how they think. That means grabbing not just what goes in and out, but every step along the way, like which tools they use and how they critique themselves. It’s way tougher than just watching inputs and outputs, but it’s the only way to get why an agent did what it did.
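A minimal sketch of this kind of step-level tracing is shown below. It is an illustration under stated assumptions rather than a real agent framework's API: `AgentTrace`, `Step`, and the step kinds (`plan`, `tool_call`, `critique`) are hypothetical names chosen to mirror the stages described above.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Step:
    kind: str                      # hypothetical labels: "plan", "tool_call", "critique"
    name: str
    duration_s: float = 0.0
    error: Optional[str] = None
    detail: dict = field(default_factory=dict)


@dataclass
class AgentTrace:
    steps: list = field(default_factory=list)

    @contextmanager
    def step(self, kind, name, **detail):
        """Record one intermediate step, with its timing and any error."""
        record = Step(kind=kind, name=name, detail=detail)
        start = time.perf_counter()
        try:
            yield record
        except Exception as exc:
            record.error = repr(exc)  # keep the failure in the trace
            raise                     # but do not swallow it
        finally:
            record.duration_s = time.perf_counter() - start
            self.steps.append(record)


# Usage with invented steps: the agent code wraps each stage in trace.step(...)
trace = AgentTrace()
with trace.step("plan", "draft_plan") as s:
    s.detail["plan"] = ["look up order", "answer user"]
with trace.step("tool_call", "order_lookup", order_id="A-1") as s:
    s.detail["result"] = {"status": "shipped"}
with trace.step("critique", "self_check") as s:
    s.detail["verdict"] = "plan is sufficient"

for s in trace.steps:
    print(s.kind, s.name, f"{s.duration_s:.6f}s", s.error)
```

Recording failed steps in the `finally` branch matters here: a step that raised is often the most useful one to inspect afterwards.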

Real-World Example: AI Observability for Insurance Claims Agents

Imagine an AI agent that handles insurance claims. It reads the claim details, checks for signs of fraud, reviews previous claims, assesses the risk level, and then routes the claim to the right person. A conventional monitoring setup sees only the claim going in and the routing decision coming out. With a good observability system, you can also see:

- Which tools were helpful or not

- How long each step took

- If the AI became more or less sure as it processed

- If sending it to a specific person made sense

- If any tool gave strange data
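To make the list above concrete, the sketch below summarizes a recorded trace for a single claim. The trace data is invented for illustration, and the field names (`tool`, `duration_ms`, `confidence`, `anomaly`) are assumptions, not a real platform's schema. Judging whether the routing decision made sense needs labeled outcomes, so it is left out here.

```python
def summarize_trace(steps):
    """Summarize per-step timing, confidence movement, and tool anomalies."""
    slowest = max(steps, key=lambda s: s["duration_ms"])
    confidences = [s["confidence"] for s in steps if s.get("confidence") is not None]
    if len(confidences) >= 2:
        # positive: the agent grew more confident; negative: less confident
        change = round(confidences[-1] - confidences[0], 3)
    else:
        change = 0.0
    return {
        "total_ms": sum(s["duration_ms"] for s in steps),
        "slowest_step": slowest["tool"],
        "confidence_change": change,
        "anomalous_tools": [s["tool"] for s in steps if s.get("anomaly")],
    }


# Invented example trace for one claim
claim_trace = [
    {"tool": "read_claim", "duration_ms": 120, "confidence": 0.60},
    {"tool": "fraud_check", "duration_ms": 850, "confidence": 0.55, "anomaly": True},
    {"tool": "claims_history", "duration_ms": 300, "confidence": 0.72},
    {"tool": "risk_score", "duration_ms": 200, "confidence": 0.81},
    {"tool": "route_claim", "duration_ms": 40, "confidence": 0.81},
]
print(summarize_trace(claim_trace))
```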

That’s the difference between an AI you can count on and one that’s just acceptable.

Why AI Observability Is Now the Foundation for Trustworthy AI

The lesson is ultimately simple: you can’t improve what you can’t measure, and you can’t measure what you can’t observe. For production AI systems in 2026, this has never been truer.

Models change. Data changes. The relationship between features and outcomes changes as the world evolves. Without proper observability, organizations only discover problems through costly business incidents and lost trust.

A strong AI observability platform completely flips this situation. Teams can detect model drift in hours instead of quarters, receive rich forensic alerts, provide clear explainability to every stakeholder, and fully trace the reasoning of even the most complex agentic systems.

The most successful organizations in 2026 will be those that treat AI observability as a core capability rather than an afterthought. Book your demo with Lumenova AI today and take the first step toward production-grade, trustworthy AI.

Frequently Asked Questions

What is AI observability?

AI observability is the practice of continuously monitoring and explaining how AI models behave in production. It collects real-time telemetry such as token usage, data and model drift signals, response quality metrics, and reasoning traces. This visibility helps teams understand, debug, and improve AI systems as they operate in real-world environments.

How does AI observability improve model performance tracking?

AI observability improves model performance tracking by replacing periodic audits with continuous monitoring and automated alerts. By collecting real-time telemetry, teams can detect model drift, investigate anomalies, and identify performance issues early, allowing them to fix problems before they impact users or business outcomes.

How is AI observability different from traditional monitoring?

Traditional monitoring was designed for deterministic software systems that either run or fail. Modern AI systems are probabilistic and evolve over time, meaning performance can degrade gradually without obvious system errors. AI observability provides deeper insight into model behavior, allowing teams to detect drift, reasoning issues, and performance changes that standard monitoring tools often miss.

How does AI observability work for agentic AI systems?

For agentic AI systems, observability traces the entire reasoning process of an AI agent. This includes planning steps, tool usage, intermediate reasoning, and final outputs. By capturing these traces, teams can understand why an agent made a decision and identify failures at the reasoning or workflow level.

How does AI observability support compliance?

AI observability supports compliance by creating transparent audit trails, explainability records, and automated alerts for model drift or performance issues. These capabilities help organizations meet governance requirements under frameworks such as the EU AI Act while also improving accountability and trust in AI-driven decisions.