March 19, 2026

Why Enterprise-Grade AI Platforms with Model Version Control Are the Antidote to Model Debt

Key Takeaways for CISOs

- Model debt is not an IT problem. It is a governance problem, and it compounds silently until it becomes a regulatory or financial crisis.

- When an AI agent or model causes damage, the liability lands on the enterprise.

- Legacy audit frameworks were not built for probabilistic AI. Real-time model versioning and immutable audit trails are now the baseline that regulators and insurers expect.

- A versioned Model Registry eliminates zombie models and duplicate training runs, the two most expensive direct symptoms of model debt.

- One-click rollback reduces mean time to recovery from days to minutes, directly protecting revenue before the board ever hears about the problem.

Glossary of Key Terms Used in this Article

A few definitions worth having in mind:

- Enterprise-Grade AI Platform with Model Version Control: A responsible AI (RAI) platform that automatically tracks, versions, and governs every AI model in production, capturing training data, decisions, and approvals in an immutable, auditable record.

- Model Debt: Undocumented, unversioned AI models running in production that nobody can confidently audit, update, or retire.

- Model Registry: A searchable catalogue of every model ever trained: performance, cost, and deployment status visible at a glance.

- Zombie Model: A live production model with no owner, no monitoring, and no documented purpose.

- Immutable Manifest: A machine-readable passport for every model (weights, data, hyperparameters, approvals) used to recreate the exact environment automatically.

How Model Debt Shows Up in Enterprises

If there is one reason enterprise-grade AI platforms with model version control have become non-negotiable for the modern C-suite, it is model debt (also called AI technical debt or AI-driven technical debt). And the truly unsettling part? For many organizations, it remains invisible until real harm has already occurred.

A regulator shows up with questions nobody can answer. A CFO stares at a seven-figure loss tied to a model that should have been retired months ago. And somewhere in production right now, a black box nobody remembers deploying is still making decisions that affect real customers and real money.

Now, model debt does not happen because the AI is bad. It happens because nobody is tracking it properly. It arises when an organization ends up operating a growing stack of models in production with no transparent record of the data used to train them, the hyperparameters applied, or even the individuals who approved their deployment in the first place. Every sprint that passes without closing that gap makes the whole environment more fragile. Harder to audit. Harder to debug. And scaling it? Well, good luck with that.

Here is the good news, though. The problem is rarely the models themselves: it is how they are being managed. That is precisely what we are getting into today: what model debt is actually costing your organization right now, and why platform-level version control is the structural fix that changes everything.

What Is Model Debt, and Why Is It Getting Worse

Model debt is the accumulation of undocumented, unversioned, and unmonitored AI models running in production that an organization cannot confidently audit, update, or retire.

AI model debt, also known as AI technical debt, encompasses the hidden long-term costs and maintenance challenges that arise when AI systems are rushed into deployment at the expense of data quality, governance, and sustainability. While similar to traditional software technical debt, it is often more complex to manage because AI relies on dynamic data that can drift and models that can become unpredictable over time.

How Model Debt Builds Up

Model debt doesn’t usually come all at once. A data scientist ships a model that works, the notebook never gets saved, the training data gets overwritten, and the hyperparameters live in a Slack message from nine months ago. Do that across a team of ten people over eighteen months, and you have a production environment nobody can explain to a regulator, a CFO, or a new hire.

Gartner predicted that by 2025, 85 percent of AI initiatives would produce flawed results, with poor model governance sitting at the center of most of those failures. The issue is rarely the AI itself: it is the lack of control over it.

To understand how governance gaps compound over time at scale, see our deep dive on scalable Model Risk Management for complex enterprises.

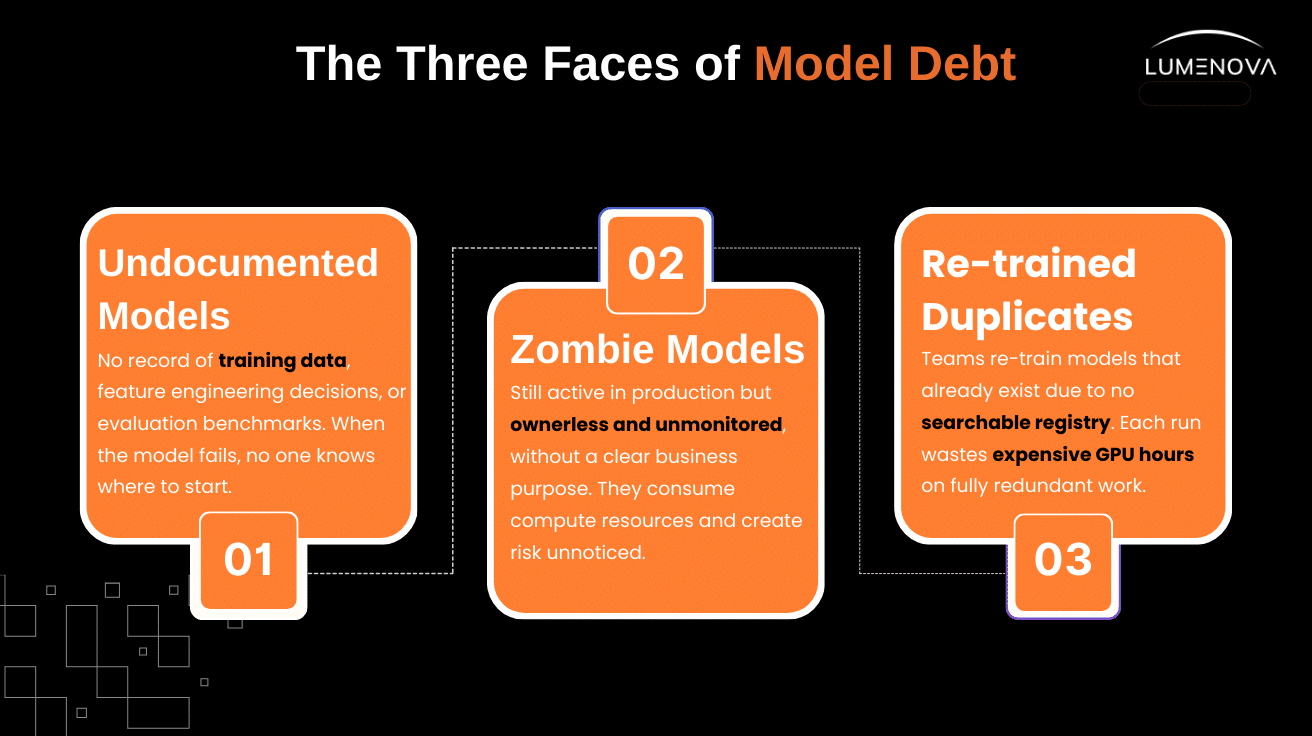

The Three Faces of Model Debt

And here is where the real damage gets specific. Model debt is not one thing going wrong, but three things going wrong.

- Undocumented models have no training data record, no feature engineering log, and no evaluation benchmark. When they fail (and they will), nobody knows where to begin.

- Zombie models are technically still active in production but have no owner, no AI monitoring, and no documented business purpose. They silently consume GPU compute and generate compliance risk while nobody notices. You probably have some right now.

- Re-trained duplicates happen when teams rebuild models that already exist, simply because there is no searchable registry to check first. Every redundant training run burns real GPU hours. And high-performance GPU computing, folks, is not cheap.
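To make the zombie definition concrete, here is a minimal Python sketch of how an inventory check might flag one. The `DeployedModel` record, its field names, and the sample inventory are all hypothetical, invented for illustration; a real platform would pull these fields from its own registry.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DeployedModel:
    """Hypothetical inventory record for one live production model."""
    name: str
    owner: Optional[str]
    monitoring_enabled: bool
    business_purpose: Optional[str]


def is_zombie(model: DeployedModel) -> bool:
    """A zombie has no owner, no monitoring, and no documented purpose."""
    return (
        not model.owner
        and not model.monitoring_enabled
        and not model.business_purpose
    )


def governance_gaps(model: DeployedModel) -> list[str]:
    """List every missing governance field, even for partial offenders."""
    gaps = []
    if not model.owner:
        gaps.append("owner")
    if not model.monitoring_enabled:
        gaps.append("monitoring")
    if not model.business_purpose:
        gaps.append("business_purpose")
    return gaps


# Made-up sample inventory, for illustration only.
inventory = [
    DeployedModel("churn-v3", "risk-team", True, "Retention scoring"),
    DeployedModel("pricing-old", None, False, None),
    DeployedModel("fraud-v1", "fraud-team", False, "Card fraud screening"),
]

zombies = [m.name for m in inventory if is_zombie(m)]  # ["pricing-old"]
```

The second helper matters as much as the first: a model with an owner but no monitoring is not a zombie yet, but it is on its way.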

The Save Point Philosophy: What Model Version Control Actually Does

There are two ways to run AI models in production. The first is to ship fast, skip the documentation, and hope nothing breaks. The second is to have an enterprise-grade AI platform with model version control that captures every state of every model, every time something changes. Needless to say, the second one is the right way to do it. That said, most organizations are still doing the first, and paying for it in ways that show up on the balance sheet.

So what does model version control actually do? Well, that is precisely what we are getting into here. Every time a data scientist runs an experiment, updates a training pipeline, or pushes a deployment, the platform takes a complete, immutable snapshot (model weights, hyperparameters, training data lineage, evaluation metrics, and who approved the change). Think of it as a Save Point for your AI. Not just the model itself. Everything that produced it.
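As a rough illustration of what such a Save Point could contain, the sketch below bundles the ingredients listed above into a frozen record and derives a content-based ID from them. The `ModelSnapshot` class, the `take_snapshot` helper, and every field name are assumptions made for this example, not any platform's actual schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ModelSnapshot:
    """Hypothetical save point: everything that produced the model."""
    weights_sha256: str
    hyperparameters: tuple       # sorted (name, value) pairs
    training_data_version: str
    eval_metrics: tuple          # sorted (name, value) pairs
    approved_by: str

    def snapshot_id(self) -> str:
        """Content hash: any change to any field yields a different ID."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


def take_snapshot(weights: bytes, hyperparameters: dict,
                  training_data_version: str, eval_metrics: dict,
                  approved_by: str) -> ModelSnapshot:
    """Freeze the current state; dicts become sorted tuples so they cannot be edited later."""
    return ModelSnapshot(
        weights_sha256=hashlib.sha256(weights).hexdigest(),
        hyperparameters=tuple(sorted(hyperparameters.items())),
        training_data_version=training_data_version,
        eval_metrics=tuple(sorted(eval_metrics.items())),
        approved_by=approved_by,
    )


# Illustrative values only.
snap = take_snapshot(b"fake-weights", {"lr": 0.01, "epochs": 5},
                     "data-2026-01", {"auc": 0.91}, "j.doe")
```

Because the ID is derived from the content, two snapshots with the same ID describe the same model state, and a silent change to a hyperparameter or dataset version cannot hide behind an unchanged label.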

This is also why the connection between version control and AI observability across the full model lifecycle is so critical: the snapshot is only valuable if the monitoring layer can consume it and act on it.

The Risk of Running Without It

And look, this is the part that should genuinely concern every executive in the room. Without model version control, a single library update (something as routine as a dependency bump) can cause a model to behave completely differently in production.

A minor shift in training data distribution can quietly bleed accuracy by double digits before any monitoring system catches it. This is the exact failure mode we explore in our guide to detecting and managing model drift. And when the regression is finally discovered, the last known good state of that model may not exist anywhere at all. Not in a system. Not in a file. Just in someone’s memory… someone who, frankly, probably has twelve other things on their plate.

The Solution: Branch, Experiment, Rollback

Here is what having the right platform actually gives you. With enterprise-grade AI platforms offering model version control, teams can branch off a verified production model and run experiments in complete isolation, the same concept as a Git branch in software development, just built for AI instead of code. If the new iteration fails evaluation or behaves unpredictably in staging, one click restores the last known good configuration: no manual rebuild, no production incident, no emergency call at 2 am.

That single capability (to roll back in seconds instead of days) is precisely what separates AI teams that ship with confidence from those that ship carefully and still end up in damage control.
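The mechanics behind a rollback can be surprisingly small once every validated version is recorded. The following is a minimal sketch, assuming a hypothetical `DeploymentHistory` class that keeps an ordered list of versions that passed evaluation; the class and method names are invented for this example.

```python
from typing import List, Optional


class DeploymentHistory:
    """Hypothetical per-model history with a pointer to the live version."""

    def __init__(self, model_name: str) -> None:
        self.model_name = model_name
        self._validated: List[str] = []   # versions that passed evaluation, oldest first
        self.live: Optional[str] = None

    def promote(self, version: str, passed_evaluation: bool) -> bool:
        """Make a version live only if it passed evaluation."""
        if not passed_evaluation:
            return False                  # failed experiments never touch production
        self._validated.append(version)
        self.live = version
        return True

    def rollback(self) -> str:
        """Retire the live version and restore the previous validated one."""
        if len(self._validated) < 2:
            raise RuntimeError("no earlier validated version to roll back to")
        self._validated.pop()
        self.live = self._validated[-1]
        return self.live


history = DeploymentHistory("churn-model")
history.promote("v1", passed_evaluation=True)
history.promote("v2", passed_evaluation=True)
history.promote("v3-experiment", passed_evaluation=False)  # rejected, v2 stays live
restored = history.rollback()  # v2 misbehaves in production, so v1 comes back
```

The point of the sketch is the precondition, not the code: rollback is only a pointer move when the last known good state was saved somewhere other than someone's memory.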

Fragmented vs. Version-Controlled: A Side-by-Side Comparison

Sometimes the clearest way to understand what model version control actually fixes is to see both worlds next to each other.

| Fragmented Workflow (Before) | Version-Controlled Workflow (After) |
| --- | --- |
| Experiments run in local notebooks; results shared informally over Slack. | Every experiment is committed to the platform with full parameter and data lineage logging. |
| Production models are hard-coded API endpoints with no traceable origin. | Each deployment is linked to an immutable manifest: weights, data snapshot, hyperparameters, and approver. |
| A failed deployment requires manual debugging across multiple repos and environments. | Failed deployments trigger a one-click rollback to the last validated version in seconds. |
| “Who trained this model?” requires an email chain and two meetings. | Provenance is queryable in the Model Registry: owner, date, data version, and performance metrics. |
| Teams retrain existing models because the registry does not exist. | Teams find the closest stable version and perform cost-effective fine-tuning. |
| Zombie models accumulate silently. Nobody knows what is live. | Every model in production has a tagged owner, monitoring policy, and scheduled review date. |
| Regulatory audits require weeks of manual documentation reconstruction. | Audit trails are auto-generated. Model history is queryable and exportable in hours. |
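For readers wondering what makes an auto-generated audit trail trustworthy, one common technique is a hash chain: each entry stores the hash of the one before it, so editing an old record breaks every later link. The sketch below illustrates that idea only. The function names and event fields are hypothetical, and nothing here describes how any specific platform implements its audit trail.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event: dict, prev_hash: str) -> str:
    body = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_event(log: list, event: dict) -> dict:
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev_hash": prev_hash,
             "hash": _digest(event, prev_hash)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry fails the check."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


# Illustrative events only.
audit_log: list = []
append_event(audit_log, {"action": "train", "model": "churn-v2", "by": "a.lee"})
append_event(audit_log, {"action": "approve", "model": "churn-v2", "by": "j.doe"})
chain_ok = verify_chain(audit_log)  # True until someone edits an entry
```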

This kind of structured governance is no longer optional. As we outline in what effective generative AI governance looks like in 2026, institutional clients now treat AI governance maturity like a credit rating, and proof of model lineage is table stakes during due diligence. For a broader framework on operationalizing this, our piece on implementing responsible AI governance walks through the practical steps in detail.

How Model Version Control Bridges Research and Production

There is a moment that happens in nearly every enterprise AI program. The model is finished. The data scientist closes the notebook, packages a Python script and a requirements.txt file, and hands it across. On the other side, a DevOps engineer receives it. They have no record of which dataset version was used. No preprocessing log. No explanation for the hyperparameter choices. The knowledge that built the model stayed with the person who built it, and that person has already moved on.

The Fragmented Handoff Problem

Research lives in notebooks. Production lives in hard-coded APIs. Between the two lives a gap that is invisible until something breaks, and by the time it breaks, reconstructing what went wrong can take longer than rebuilding from scratch. In an environment where a single training run costs tens of thousands of dollars, and regulators are demanding explainability, that gap is not a workflow inefficiency. It is a liability. Quiet, compounding, and entirely preventable.

This handoff failure is also one of the primary reasons enterprise AI governance needs explainability baked in from the start, because if nobody can reconstruct what the model learned or why, they certainly cannot explain it under audit.

The Immutable Manifest: A Passport for Your Model

Enterprise-grade AI platforms with model version control replace that broken handoff with an immutable manifest: a machine-readable record capturing every ingredient that went into building the model. Automatically. Every time. Without anyone having to remember.

When the model moves from research to staging to production, the platform uses that manifest to recreate the exact same environment. What was tested is precisely what gets deployed. The model that lived in a Jupyter notebook arrives in a production Kubernetes cluster byte-for-byte identical. No drift. No gap. No silence before something breaks.

And if the model is ever retired (voluntarily or under regulatory pressure), that manifest remains as a permanent, auditable record of every decision that produced it.
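Here is a small sketch of how a manifest can enforce "what was tested is what gets deployed." It assumes a hypothetical JSON manifest holding the four ingredients named in this article (weights, data snapshot, hyperparameters, approver); the function names and field names are illustrative, not a real platform API.

```python
import hashlib
import json


def build_manifest(weights: bytes, data_snapshot: str,
                   hyperparameters: dict, approver: str) -> str:
    """Serialize the model's ingredients as machine-readable JSON."""
    manifest = {
        "weights_sha256": hashlib.sha256(weights).hexdigest(),
        "data_snapshot": data_snapshot,
        "hyperparameters": hyperparameters,
        "approver": approver,
    }
    return json.dumps(manifest, sort_keys=True, indent=2)


def matches_manifest(manifest_json: str, candidate_weights: bytes) -> bool:
    """Deployment gate: the artifact must hash to the value that was tested."""
    manifest = json.loads(manifest_json)
    actual = hashlib.sha256(candidate_weights).hexdigest()
    return actual == manifest["weights_sha256"]


# Illustrative values only.
tested_weights = b"weights-as-tested-in-staging"
manifest_json = build_manifest(tested_weights, "customers-2026-02",
                               {"lr": 0.01, "max_depth": 6}, "j.doe")

safe_to_deploy = matches_manifest(manifest_json, tested_weights)
tampered = matches_manifest(manifest_json, b"weights-rebuilt-by-hand")
```

A real manifest would also pin library versions and the container image, which is how a routine dependency bump gets caught before it reaches production rather than after.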

The CFO’s Case for Model Version Control: Turning GPU Waste into Competitive Advantage

Training large AI models is not cheap. And for organizations running dozens of experiments per quarter, the financial risk of unmanaged model workflows is not a rounding error. It is a line item. One that most CFOs have not been handed a report on yet.

The Waste: Accidental Re-Training

It happens like this: a data scientist starts a new project, searches the shared drive, finds nothing, and rebuilds a model from scratch. A model a colleague finished six months ago and simply forgot to document. The work was done. The record did not exist. So the organization paid for it twice.

The Gain: A Searchable Model Registry

A Model Registry gives organizations a searchable catalogue of every model ever trained (performance metrics, training data version, compute cost, deployment status), all visible before anyone spins up a single GPU.

Instead of rebuilding from scratch, teams find the closest stable version. Update only what needs to change. The cost difference is significant. The performance outcome is comparable.
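In practice, "check the registry first" can be as simple as a filtered search. The sketch below assumes a hypothetical in-memory registry with the fields mentioned above; the `RegistryEntry` class, the `find_reusable` helper, and all sample numbers are made up for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RegistryEntry:
    """Hypothetical registry row for one trained model."""
    name: str
    task: str
    accuracy: float
    training_data_version: str
    compute_cost_usd: float
    status: str  # e.g. "production", "staging", "archived"


def find_reusable(registry: List[RegistryEntry], task: str,
                  min_accuracy: float) -> List[RegistryEntry]:
    """Return existing models for a task that meet the bar, best first."""
    matches = [e for e in registry
               if e.task == task and e.accuracy >= min_accuracy]
    return sorted(matches, key=lambda e: e.accuracy, reverse=True)


# Made-up sample data, for illustration only.
registry = [
    RegistryEntry("churn-v1", "churn", 0.81, "data-2025-06", 12000.0, "archived"),
    RegistryEntry("churn-v2", "churn", 0.88, "data-2025-11", 15000.0, "production"),
    RegistryEntry("fraud-v4", "fraud", 0.93, "data-2025-12", 40000.0, "production"),
]

candidates = find_reusable(registry, task="churn", min_accuracy=0.85)
best_starting_point = candidates[0].name if candidates else None  # "churn-v2"
```

If the search returns a candidate, the team fine-tunes from it; if it returns nothing, the new training run is at least a deliberate decision rather than an accident.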

And the downstream impact touches every number a CFO tracks. Duplicate training runs disappear. Experimentation accelerates because failure is no longer expensive. Compliance documentation that used to take weeks gets generated automatically. And when a production model degrades, recovery is measured in minutes rather than days.

Conclusion

At some point, every executive running an AI program gets asked a version of the same four questions:

- What is actually running in production right now?

- Why was it trained the way it was?

- Who signed off on it?

- If it breaks tomorrow, what is the plan?

Simple questions, really. The kind that should take sixty seconds to answer. And yet, for most organizations operating without enterprise-grade AI platforms with model version control, the answers are buried somewhere between a notebook nobody saved, a Slack message from eight months ago, and the institutional memory of an engineer who quietly left the company in Q3. That, simply put, is model debt at its most expensive and most avoidable.

Here is what is worth understanding about the organizations pulling ahead in AI right now. It is not necessarily the ones with the most sophisticated models. It is the ones who built the infrastructure to govern them properly. Fast deployment without version control is not a competitive advantage. It is a deferred crisis, and deferred crises, as any CFO will tell you, always cost more to resolve than they would have cost to prevent.

Enterprise-grade AI platforms with model version control are what close that gap structurally. And that is exactly what Lumenova AI, our enterprise-grade AI platform with model version control, was built for: giving data science teams the freedom to move fast while giving the C-suite the governance, audit trails, and bounded autonomy that regulators and insurers now require as standard. Not bolted-together tools. One platform. Built for enterprises that understand that in 2026, how you govern your AI matters as much as how you build it.

Frequently Asked Questions

What is model debt?

Model debt is what happens when your organization has AI models running in production that nobody can fully account for: no record of the training data, no documented decisions, no clear owner. Teams prioritized shipping over tracking, and now you have a black box that a regulator, a CFO, or a new hire cannot explain. It compounds quietly until something breaks at the worst possible moment.

What is model version control?

Model version control is the practice of tracking every iteration of a machine learning model, including its weights, training data, hyperparameters, and evaluation results, in a structured, searchable system. Think of it as a Git for your AI models, except it captures everything, not just code. If something goes wrong in production, you can roll back to the last known good state in minutes, not days.

What are the benefits of model version control?

The short answer: your team stops flying blind. Full reproducibility of any model state means no more “it worked on my machine” failures. One-click rollback means a bad deployment doesn’t become a production crisis. A searchable Model Registry kills duplicate training runs. And auto-generated lineage cuts regulatory audit prep from weeks to hours. It’s the difference between shipping with confidence and shipping and hoping.

What is a zombie model?

A zombie model is a live production model with no owner, no monitoring, and no documented reason for existing. It’s consuming GPU compute, generating compliance exposure, and nobody on your team knows it’s there or what it’s doing. It’s one of the most direct and expensive symptoms of unmanaged model debt, and it’s far more common than most engineering leaders realize.