April 2, 2026

The 5 Layers of the Essential AI Control Stack You Can’t Afford to Skip

Contents

AI Control Tools = the systems, processes, and frameworks that help organizations monitor, manage, and govern AI models throughout their lifecycle.

They ensure AI behaves reliably, stays compliant with regulations, mitigates risks, and aligns with business objectives.

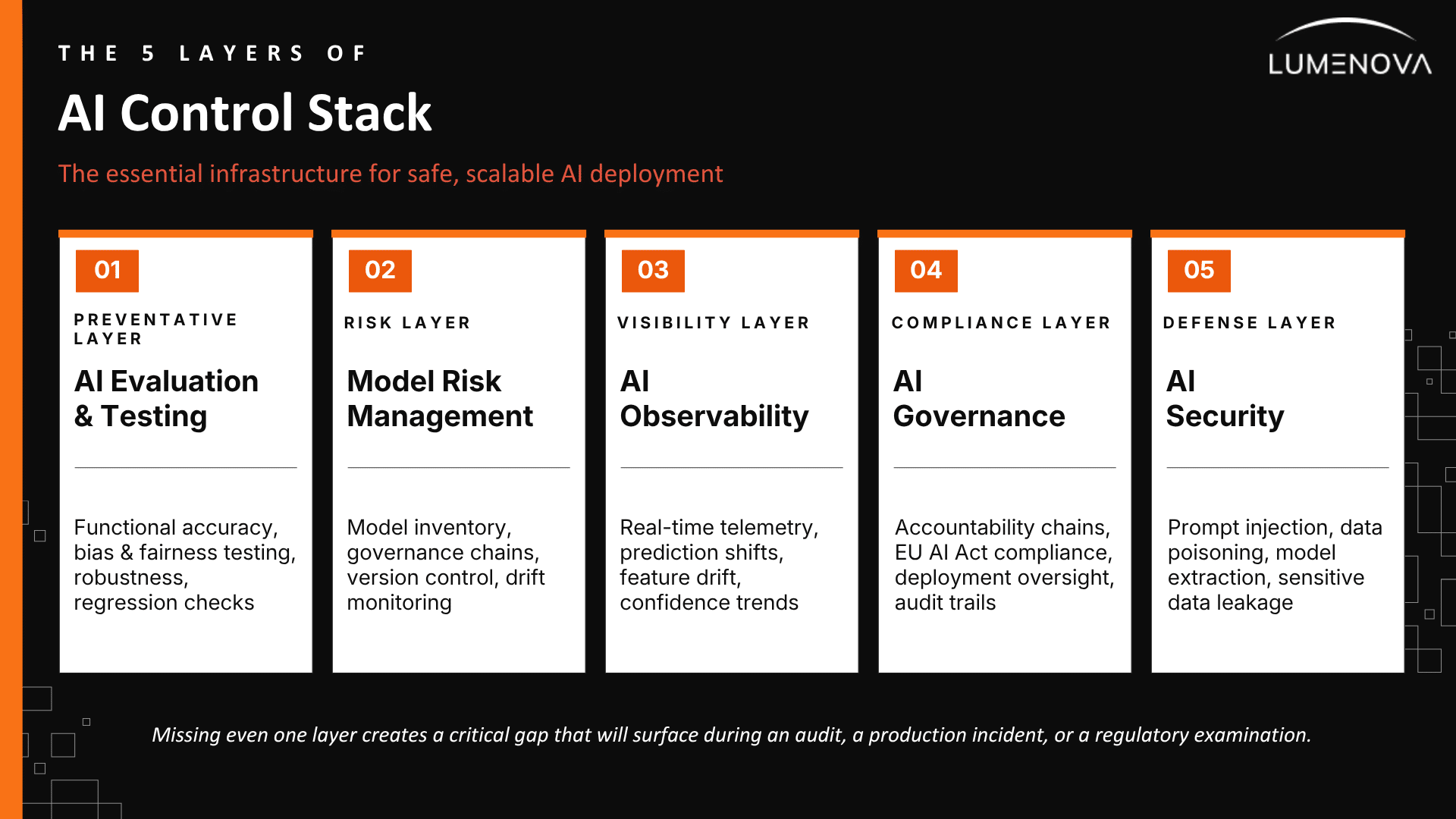

These tools cover areas such as evaluation, model risk management, observability, governance, and security, forming a layered “control stack” that prevents failures in production and supports safe, scalable AI deployment.

Key Takeaways for Enterprise Leaders

- AI control tools are the foundation for moving from experimental AI projects to scalable, production-ready systems. For organizations operating in the EU and globally, they are essential for safe, compliant, and confident AI deployment.

- A robust AI control framework consists of five critical layers: AI evaluation, model risk management, observability, governance, and security. These components work together to reduce operational risk. Missing even one layer can create serious compliance gaps, especially under increasing regulatory scrutiny.

- Implementing AI controls early in the machine learning lifecycle significantly reduces long-term costs. Compared to fixing failures after deployment, early integration helps organizations avoid expensive incidents, model failures, and regulatory penalties.

- The integration of high-risk AI systems remains a key operational focus. While regulatory frameworks like the EU AI Act continue to evolve, the current emphasis is on aligning governance and transparency protocols with organizational standards. Adopting a structured AI governance framework ensures consistent internal performance and prepares the organization for future scaling.

The High Cost of Deferral: What Happens When You Skip AI Observability

Development cycles often culminate in production deployments where telemetry alignment remains a deferred priority. The model demonstrated functional accuracy during initial testing, the demonstration was successful, and the pressure of the deadline was undeniable. Consequently, systems go live with a fragile commitment to “add observability later” while the validation record languishes in an ephemeral notebook environment. In reality, “later” is the primary residence of AI risk.

AI control tools are the underlying infrastructure that transforms “later” into a successful regulatory examination, a resilient production environment, or a defensible financial conversation. When properly integrated, these tools represent a strategic investment in sustainable development speed.

Our guide outlines the five interdependent layers of the essential AI control stack and defines the specific phase of the ML lifecycle where each mechanism must be embedded.

The 5 Layers of the Essential AI Control Stack You Can’t Afford to Skip

The AI control stack is best understood as a series of interdependent layers, each purpose-built to address a distinct failure mode. These layers comprise the essential infrastructure for sustainable development; neglect any single layer, and you create a critical gap that will inevitably surface during an audit, a production incident, or a regulatory examination.

Layer 1: AI Evaluation & Testing – The Most Cost-Effective Stage to Fix Failure

We call this: the “preventative maintenance” layer. (If we’re going to spend hours finding a problem, it might as well be when the stakes are low, right?) Evaluation exists mainly to find flaws way before production or (God forbid) a regulatory exam. But let’s be honest: most teams underinvest here. Simply checking a score on a test set is like checking if our tires are round. We need to look under the hood and assess the real challenges before we declare this model ready to rock.

- Functional accuracy on unseen data: Generalization to data the model has never encountered is the metric that matters; performance on training data is a starting point.

- Bias and fairness testing: A model can achieve high accuracy while producing outputs that are systematically skewed against protected groups. In regulated industries, that is not a model performance problem. It is a legal one. Understanding the types of AI bias that affect different use cases is the starting point for knowing what to test for.

- Robustness testing: What happens when inputs are adversarial, out of distribution, or simply unusual? Models that collapse on edge cases are liabilities that have not been triggered yet.

- Regression testing: Incremental updates accumulate. Regression testing ensures that improvements in one area have not quietly degraded performance somewhere else.

The team validating the model should be separate from the team that built it. Validating a model requires an independent review and formal approval from the second line.

The practical upshot of getting evaluation right: deployment decisions become defensible. “We have documented evidence that it is ready, produced by an independent function.” That distinction matters when a regulator asks for the validation record.

For a detailed look at what independent validation should actually test for, see our guide to fairness and bias mitigation strategies.

Layer 2: Model Risk Management – The Key to Unlocking Enterprise AI At Scale

Model risk management is the discipline of tracking, measuring, and governing the risk that AI models create while they are running in production. Most organizations find their primary challenge in aligning their policies with operational reality.

A risk framework that lives in a PDF is not model risk management. It is a compliance artifact. Real model risk management has three components that are harder to document than they are to do:

- The first one is a complete AI model inventory: every model in production, proprietary, and vendor-provided, with documented ownership, purpose, data inputs, and risk tier.

The inventory is complete when the answer to “what is actually running right now?” is available within seconds. It is the foundation for everything else in the control stack.

- The second one is documented governance chains. This involves actual accountability chains running from individual model owners up to board-level oversight, with escalation protocols that specify what triggers a review and who makes the call.

- The third one is model version control: every iteration of every model (weights, training data lineage, hyperparameters, evaluation results, who approved what) captured automatically in a searchable system. This allows for maintaining model behavior in production and identifying when changes occur, or restoring to the last known good state. This is what enterprise-grade AI platforms with model version control are built to solve.

Running alongside all three is model drift monitoring: the continuous measurement of whether the predictive performance of the model remains stable as the real world aligns with the conditions under which it was trained. Drift is a subtle and significant failure mode in production AI. It remains hidden until someone notices the business impact.

Layer 3: AI Observability – See What Standard Monitoring Misses

You improve what you measure. You measure what you observe. This is as true for AI systems as it was for manufacturing quality in 1950, and organizations should prioritize figuring this out quickly.

AI observability is the practice of continuously monitoring and explaining model behavior in production through real-time telemetry that surfaces anomalies as they happen.

Observability is its own layer, distinct from monitoring, because AI models have unique failure modes. A model stays active while producing suboptimal outputs, generating predictions that look plausible while accuracy erodes or bias compounds silently. Infrastructure monitoring misses that; observability catches it.

The signals that matter in practice:

- Prediction distribution shifts: When the range or frequency of model outputs starts diverging from baseline, something has changed.

- Feature drift: Statistical properties of input data diverging from the training distribution reliably predict that output quality will follow.

- Confidence score trends: A model that was producing confident predictions and is now hedging has noticed something is different, even if you have not.

- Response quality and downstream business outcomes: Especially critical for generative AI, where token-level signals can look fine while the actual outputs are getting worse.

In 2026, observability has to extend to agentic AI systems (models that do not generate a single output but execute multi-step reasoning loops). Tracing which tools were used, how confidence shifted across steps, and whether routing decisions were sound requires a fundamentally different approach than input-output monitoring.

Layer 4: AI Governance – Turning Compliance from a Burden into an Advantage

Governance is the layer that ties the others together and is frequently understood as being about accountability. It is about documented accountability: who owns which models, who has authority to approve deployment, what the escalation path looks like when something goes wrong, and how the organization can demonstrate all of that on demand.

The compliance dimension is real and worth naming directly. The EU AI Act makes several governance requirements mandatory for high-risk AI models (risk assessments, transparency obligations, human oversight documentation), and enforcement timelines are not hypothetical. For organizations building or deploying AI that falls under its scope, a functioning AI governance framework is a legal prerequisite. Not a best practice. A requirement.

But the more immediate operational argument for governance is simpler: the AI programs that scale successfully are the ones where decisions about model deployment, retirement, and oversight are made deliberately and documented early in the process.

The question worth running annually: “Could we produce all the relevant documentation within thirty days if a regulator asked?” If the answer is no, that gap is where examination problems live.

Layer 5: AI Security – Defend Against Injection, Poisoning, and IP Theft

AI systems introduce attack surfaces that require new security designs. The threat model is unique, and the control tooling needs to reflect that.

- Prompt injection: An attacker crafts an input that causes the model to execute unintended instructions. In agentic AI systems that can take real-world actions, the blast radius of a successful injection is substantially larger than in a traditional large language model context.

- Training data poisoning: Deliberate corruption of training data to introduce biases or backdoors that affect production behavior. This is particularly relevant for models trained on third-party or web-scraped data pipelines.

- Model extraction: Systematic querying of a production model to reconstruct its behavior through its API. It is an IP risk as well as a security risk for organizations whose models represent competitive assets.

- Sensitive data leakage: Models trained on confidential data can surface that data through inference. It’s of particular concern for models trained on customer records, proprietary documents, or regulated information.

The security layer of the control stack includes input validation, output filtering for sensitive data, rate limiting, and anomaly detection on API usage patterns, and (for GenAI specifically) guardrails that enforce content policy regardless of prompt construction. Security is a primary consideration in AI systems. It is the constraint that shapes how the system gets built.

The AI Control Tools Timing Guide: When to Integrate Each Layer for Maximum Safety

Knowing what each layer does is straightforward. The more consequential question is when each control mechanism gets embedded. Controls should be integrated early, as initial decisions determine whether the controls will actually work.

Control tooling introduced at every phase of the lifecycle costs a fraction of what it costs to retrofit after a production incident. The architecture looks like this:

Phase 1: Development – Three Steps Before the First Training Run

The control mechanisms that matter during development are the ones that govern how the model gets built. Three things should be in place before a training run begins:

- Version control from day one. Every experiment (data version, hyperparameter configuration, training run, evaluation result) gets logged automatically in a system. This ensures any future investigation of the model’s behavior is possible.

- Bias constraints in the data pipeline. If the training data has demographic imbalances, addressing them after the model is trained is harder and less reliable than addressing them in data preparation. The mitigation strategies that actually work are structural, not cosmetic.

- Risk tier assignment before training begins. The intended use case should be assessed for potential harm before spending GPU cycles. A fraud detection model affecting claims processing and a recommendation model suggesting article topics belong to different risk tiers. They should be developed under specific controls.

Phase 2: Testing – Why Independence is the ONLY Path to Defensible Deployment

Testing is where the independence principle is required. The team validating the model should be separate from the team that built it, and validation must be conducted internally regardless of vendor assurances.

Evaluation at this phase covers functional accuracy on unseen data, bias analysis across protected attributes, robustness testing on adversarial and edge-case inputs, and regression testing against previously validated behavior. Each test produces documentation that should be retained to ensure it exists for any potential inquiry.

Security testing belongs at this phase too, as a systematic assessment of the attack surfaces specific to the architecture and deployment context of this model. For LLM-based models, that means prompt injection testing. For models trained on sensitive data, it means targeted probes for memorization and leakage.

The output of the testing phase is a validation record: documented tests, results, thresholds, and approvals. This is a complete record, which is exactly what an examiner is looking for.

Phase 3: Deployment – The Critical 6-Point Go-Live Checklist

Deployment is where governance controls move from design to production. Before any model goes live in a regulated decision context, a pre-deployment checklist should confirm that:

- ☐ Model is in the inventory with owner, risk tier, purpose, and data inputs documented.

- ☐ Independent validation record is reviewed and approved by the appropriate committee.

- ☐ Vendor contracts (where applicable) are confirmed to include audit rights.

- ☐ Consumer or downstream stakeholder notice process is confirmed for the relevant use case.

- ☐ Rollback capability is confirmed (the ability to revert to the last known good state in minutes, not days).

- ☐ Observability thresholds are configured, and alerts are routed to people with the authority to act on them.

A rollback that takes minutes instead of days is the difference between a recoverable deployment incident and a production crisis. With model version control, a failed deployment can be restored quickly while the problem is diagnosed. One-click rollback is what allows AI teams to ship confidently and manage damage effectively.

Phase 4: Monitoring – Continuous Oversight and Scheduled Drift Assessments

Monitoring is a continuous operational state. Teams should treat it as a constant activity to identify problems through tooling before they impact the business.

The observability layer configured at deployment should include defined thresholds for the signals that matter: prediction distribution shifts, feature drift metrics, output quality scores, confidence trend changes, and downstream business outcomes. When those thresholds are crossed, alerts should route automatically to people with the context and authority to act.

Scheduled drift assessments sit alongside event-triggered alerts. A model may experience gradual degradation that requires periodic assessments with documented results and explicit re-validation triggers to maintain monitoring credibility.

For high-risk models, monitoring should also include a regular review of whether the model remains appropriate for production. Models often stay live due to organizational inertia. An inventory with tagged owners and scheduled review dates makes retirement a deliberate decision. Our piece on scalable model risk management walks through how to structure that review cadence in practice.

Conclusion: Stop Accumulating Hidden Debt, the Stack Is the Strategy

The AI programs that fail tend to fail subtly, with a slow accumulation of hidden debt: undocumented models, unmonitored drift, ungoverned third-party tools, and untested failure modes. The consequences appear as regulatory findings, production incidents, or consumer harm events that become visible only after the impact has occurred.

The five layers described here are the infrastructure that makes AI development sustainable. Built in from the start, they reduce the cost of every deployment, every update, and every incident that may occur along the way. Early implementation is more cost-effective and efficient than later retrofitting.

Your organization requires this infrastructure. If you are deploying AI in decisions that affect real people or real money, you need it. Consider whether you have built it yet, and identify the current cost of any existing gaps.

Ready to Ship AI Confidently? Book Your Audit-Ready Stack Demo with Lumenova AI

The Lumenova AI RAI platform integrates model inventory, version control, bias testing, observability, and audit-ready governance into a single system, ensuring your team has a unified system, eliminating the need for point solutions. Book your discovery call today!

Additionally, Lumenova AI also provides a dedicated AI glossary that bridges the terminology gaps between AI governance, risk management, and responsible AI. This resource is purpose-built to ensure the clarity required during internal audits and regulatory examinations, serving as the definitive reference for every technical and legal term used throughout the machine learning lifecycle.

Frequently Asked Questions

AI control tools are the technical and governance mechanisms organizations use to manage, monitor, and govern AI models across their full lifecycle. The category includes evaluation and testing frameworks, model risk management infrastructure, observability platforms, governance tooling, and AI-specific security controls. Together they form the stack that allows organizations to deploy AI with confidence and to demonstrate that confidence to regulators, boards, and customers when asked.

Traditional monitoring was built for deterministic software: systems are either active or inactive. AI systems fail probabilistically and gradually. A model can stay active while its accuracy erodes, its outputs drift, or its predictions become systematically biased, and these failures occur without triggering standard uptime alerts. AI observability is purpose-built to catch the subtle patterns missed by standard monitoring.

At every phase. Version control and risk tier assessment belong in development. Independent validation and security testing belong in the testing phase. Governance approvals and rollback capability belong in deployment. Continuous observability and scheduled drift evaluation belong in monitoring. Control tooling should be embedded throughout to shape decisions from the start.

The EU AI Act requires organizations deploying high-risk AI systems to implement risk assessments, maintain technical documentation, ensure human oversight, and provide transparency to users. These requirements map directly to the governance, evaluation, and observability layers of the control stack. Organizations with existing governance infrastructure will find compliance straightforward. Others should prepare for the associated costs.

Monitoring typically checks whether a system is active. Observability goes deeper: it surfaces why model behavior is changing. For AI systems, that distinction matters because degradation appears subtle. It looks like a slowly eroding prediction quality, a shift in output distributions, or a model that responds with decreasing accuracy. Observability catches those patterns before they become incidents.