April 28, 2026

Dear Enterprises, Are Your Governance Platforms Designed by Generative AI?

This article may well read as a strongly controversial opinion. So be it: deploying a high-risk system with zero oversight is far more contentious. The era of managerial choice is dead. AI governance platforms are now the final structural shield against systemic AI failure, and any organization deploying AI at scale knows it invites regulatory enforcement if that shield fails. Yet the market remains saturated with pretenders: compliance dashboards masquerading as real governance. Let’s call a spade a spade: executives continue to fall hook, line, and sinker for these hollow, performative, analyst-curated fantasies.

Rapid Adoption and the Unchecked Frenzy: Why We Need Real AI Governance Platforms

We have witnessed a chaotic, unchecked, dangerous frenzy in enterprise AI adoption. According to the Workplace Intelligence 2025 Enterprise AI Adoption Survey, 72% of C-suite leaders report their company has faced at least one significant challenge in their generative AI adoption journey, and 95% admit their adoption process needs improvement. Control is absent.

This rapid, widespread integration of algorithmic decision-making across every business unit creates operational risk on a scale previously unimaginable. The enterprise has already committed to the digital revolution; the decision to adopt AI has been made. Many are barking up the wrong tree, however, if they think a simple spreadsheet is enough to govern it. The operational question now is: can you control the machine after you plug it in?

Our task is to keep that revolution under control, which is precisely where robust AI governance platforms enter the fray.

Regulatory Pressure and Risk Exposure: The Structural Imperative for AI Governance Platforms

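The regulatory climate has shifted from quiet consultation to explicit, mandatory law. The EU AI Act is binding legislation, and although the NIST AI RMF is formally voluntary, it is widely used as the benchmark against which AI risk practices are judged.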

Governance is an immediate, brutal, operational imperative. Every new model deployment increases regulatory surface area. Every unmonitored model presents an immediate financial and reputational liability. This is the new financial truth of the digital age: a balance sheet now carries the explicit threat of algorithmic failure.

To keep everything on the up and up, recognize that the penalties for noncompliance make a strong case for building structural competence through legitimate governance solutions.
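To make the stakes concrete, here is a minimal sketch of how the EU AI Act's fine ceilings scale with company size, based on our reading of Article 99: the ceiling is the higher of a fixed amount or a share of total worldwide annual turnover for the preceding financial year, and the lower of the two for SMEs. The tier labels and the `max_fine_eur` helper are our own illustrative names, and the figures should be checked against the official text before anyone relies on them.

```python
from decimal import Decimal

# Fine ceilings as we read Article 99 of the EU AI Act (verify against the official text).
# Each tier maps to (fixed cap in EUR, share of total worldwide annual turnover).
FINE_TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "other_obligation": (Decimal("15000000"), Decimal("0.03")),
    "incorrect_information": (Decimal("7500000"), Decimal("0.01")),
}


def max_fine_eur(tier: str, annual_turnover_eur: Decimal, is_sme: bool = False) -> Decimal:
    """Return the fine ceiling: the higher of the two caps, or the lower one for SMEs."""
    fixed_cap, turnover_share = FINE_TIERS[tier]
    turnover_cap = annual_turnover_eur * turnover_share
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)
```

For a company with EUR 2 billion in turnover, the turnover-based cap dominates: 3% for most obligations is EUR 60 million, four times the fixed amount.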

Choosing AI Governance Platforms to Manage Risk, Compliance, and Oversight

Implementing a responsible AI framework is an enterprise necessity; regulators made that decision for us. Competence is the only remaining variable in choosing AI governance platforms that actually work. You need a system capable of turning manual review into an automated, auditable, scalable process.

These platforms represent the crucial enforcement layer between strategic intent and deployed reality. They secure AI accountability across the entire operational lifespan, ensuring your claims of compliance are truthful.

The Challenge: Evaluation Criteria for AI Governance Platforms Are Often Unclear

The market is saturated. Vendors loudly announce “full coverage.” They offer vague promises of “responsible innovation.” We find that the evaluation criteria for distinguishing structural capability from marketing theater are woefully underdeveloped. Buyers often rely on checklists which obscure the operational depth required.

We must demand an examination of criteria that separate the truly capable from the hopelessly theatrical, the genuine from the fraudulent, and the survival-ready from the obsolete. At the end of the day, the correct choice in AI governance platforms determines enterprise survival.

Why AI Governance Platforms Are Critical: The Existential Necessity of Control

A governing framework is far more than written documentation. It is revised again and again, often rising from the rust and ruin of spectacular failures to become one of the most cited documents in regulatory history. Models degrade. Training data drifts into obsolescence. Regulatory compliance frameworks shift. Silence from an unmonitored model is not stability; it is the precursor to an operational collapse that lawyers will dissect for decades. Without AI governance platforms, treat any claim of model stability with skepticism.

Organizations with deployed models feel the brutal outcome of absent governance: fraud detection models underperform; credit-scoring models accumulate systemic bias; generative AI assistants produce non-compliant outputs. We are faced with dynamic AI systems and static oversight structures. The operational gap is a dangerous, widening chasm that requires the intervention of advanced AI governance platforms.

The EU AI Act codified this reality. Article 9 mandates a risk management system for high-risk AI. Article 17 requires a quality management system. These apply throughout the entire operational lifespan. Organizations treating compliance as a mere documentation sprint will fail the only test that matters: the audit. A governance platform covering only deployment-time checks is a glorified onboarding checklist. We look for continuous, automated enforcement provided by elite AI governance platforms.

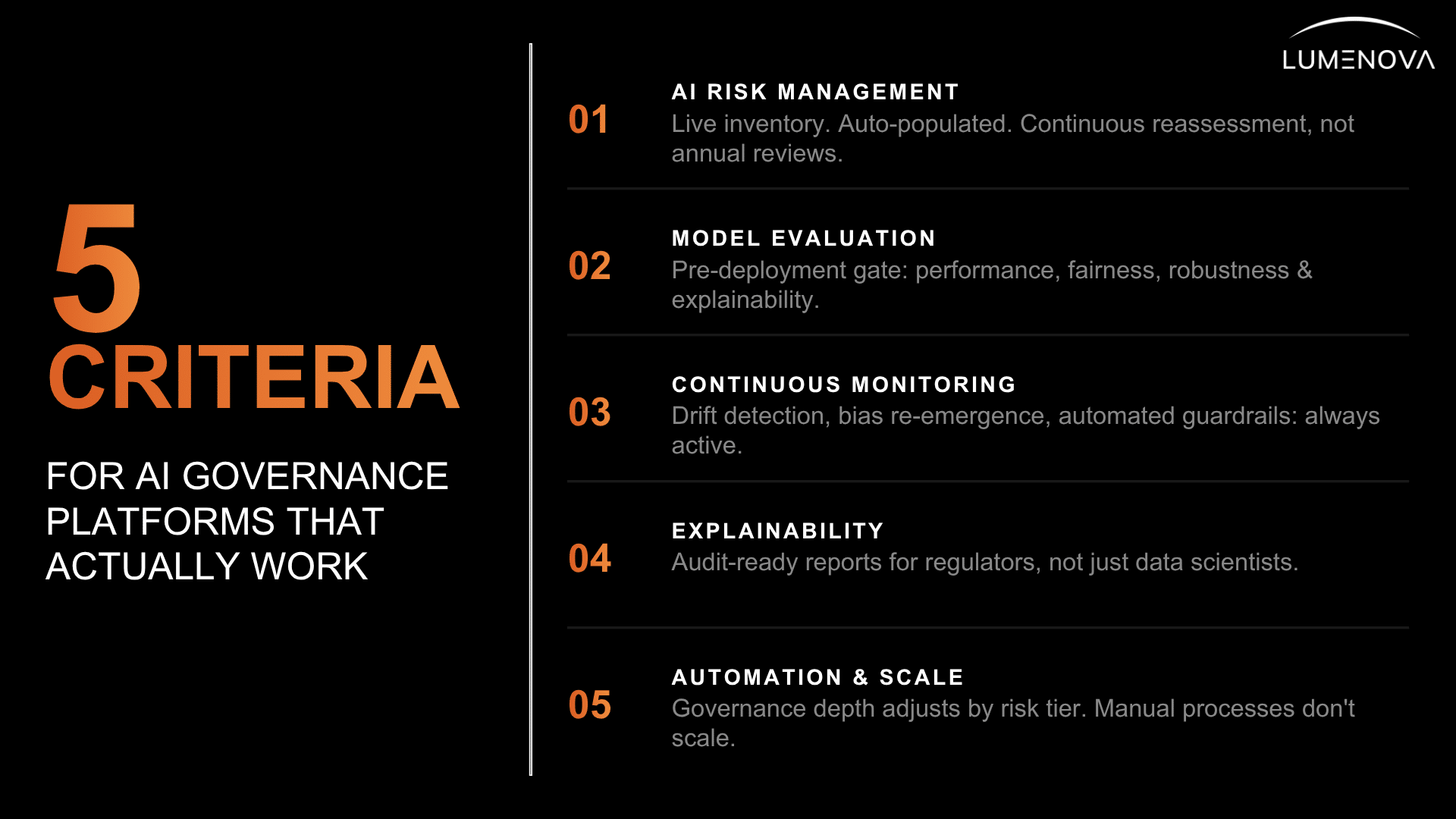

5 Key Evaluation Criteria for AI Governance Platforms: The Uncompromising Checklist

1. Comprehensive AI Risk Management

A platform earns consideration only if it handles AI risk management end to end: identification, assessment, prioritization, and mitigation. These must run as automated processes tied directly to operational context; this is the only way to scale. Manual entry reintroduces the very failure mode the platform claims to solve.

The minimum acceptable standard is a live AI inventory that auto-populates. This inventory must be the singular source of truth for the entire organization. Modern AI governance platforms must apply configurable risk tiers. A back-office summarization tool carries a vastly different risk exposure than a customer-facing large language model that influences credit decisions. Risk assessments must be continuous, re-triggered by model changes, and independent of a static, annual review schedule. We require a system that understands the variable geometry of organizational risk.
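A minimal sketch of what an auto-populating, change-triggered inventory could look like. The `ModelRecord` fields, the three tier names, and the tiering rule are hypothetical illustrations, not any vendor's schema: each model-registry event upserts the inventory entry, and any change to the version or use context re-derives the risk tier and queues a fresh assessment.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskTier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class ModelRecord:
    name: str
    version: str
    customer_facing: bool
    affects_credit_decisions: bool
    tier: RiskTier = RiskTier.LOW
    assessment_current: bool = False


def classify(record: ModelRecord) -> RiskTier:
    """Hypothetical tiering rule: credit impact is high risk, customer exposure is medium."""
    if record.affects_credit_decisions:
        return RiskTier.HIGH
    if record.customer_facing:
        return RiskTier.MEDIUM
    return RiskTier.LOW


@dataclass
class Inventory:
    records: dict[str, ModelRecord] = field(default_factory=dict)
    reassessment_queue: list[str] = field(default_factory=list)

    def on_registry_event(self, incoming: ModelRecord) -> None:
        """Upsert from a registry event; a new model or material change re-triggers assessment."""
        existing = self.records.get(incoming.name)
        changed = existing is None or (
            existing.version,
            existing.customer_facing,
            existing.affects_credit_decisions,
        ) != (incoming.version, incoming.customer_facing, incoming.affects_credit_decisions)
        if changed:
            incoming.tier = classify(incoming)
            incoming.assessment_current = False  # stale until the new assessment completes
            self.records[incoming.name] = incoming
            self.reassessment_queue.append(incoming.name)
```

Because the trigger is the registry event rather than a calendar, a back-office summarizer and a credit-facing LLM each get reassessed exactly when they change, at the tier their context warrants.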

2. Model Evaluation and Testing

Pre-deployment evaluation is the absolute gate. Legitimate AI governance platforms must support model evaluation across both traditional machine learning and generative AI models, including RAG architectures. The evaluation suite must cover performance, fairness, robustness, and explainability. Anything less is a technical abdication, and every omitted dimension is a hazard waiting to reach production.

Hallucination detection and output safety testing are mandatory for generative models. These checks are essential for enterprise deployment. Model risk management in complex enterprises requires this level of granular, uncompromising visibility. The system must support over 200 quantitative and qualitative metrics to truly claim rigor. We must avoid superficial checks. We require a forensic depth of analysis. The system must prove trustworthiness before it touches the user base.
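The "absolute gate" can be sketched as a function that computes a performance metric and a fairness metric on held-out data and refuses promotion if either misses its threshold. We use accuracy and demographic parity difference (the gap in positive-prediction rates between groups) as two stand-ins for a much larger metric suite; the function names and threshold values are placeholders of our own, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class GateResult:
    accuracy: float
    parity_gap: float
    passed: bool
    reasons: list[str]


def demographic_parity_difference(preds: list[int], groups: list[str]) -> float:
    """Largest gap in positive-prediction rate between any two groups."""
    rates = []
    for g in set(groups):
        group_preds = [p for p, grp in zip(preds, groups) if grp == g]
        rates.append(sum(group_preds) / len(group_preds))
    return max(rates) - min(rates)


def evaluation_gate(
    preds: list[int],
    labels: list[int],
    groups: list[str],
    min_accuracy: float = 0.85,    # placeholder threshold
    max_parity_gap: float = 0.10,  # placeholder threshold
) -> GateResult:
    """Block deployment unless both performance and fairness thresholds are met."""
    accuracy = sum(p == y for p, y in zip(preds, labels)) / len(labels)
    gap = demographic_parity_difference(preds, groups)
    reasons = []
    if accuracy < min_accuracy:
        reasons.append(f"accuracy {accuracy:.2f} below {min_accuracy:.2f}")
    if gap > max_parity_gap:
        reasons.append(f"parity gap {gap:.2f} above {max_parity_gap:.2f}")
    return GateResult(accuracy, gap, passed=not reasons, reasons=reasons)
```

Note that a perfectly accurate model can still fail the gate: accuracy says nothing about whether positive outcomes are distributed evenly across groups.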

3. Continuous Monitoring and Alerts

Continuous monitoring and alerts are what separate a governance platform from a glorified document. Pre-deployment evaluation without production monitoring guarantees failure: it leaves compliance exposure at its maximum, and that structural oversight gap makes eventual compliance violations a certainty.

The monitoring layer covers model drift detection, performance degradation, and bias re-emergence. Alerts must trigger a verifiable, automated response, because output behavior can shift after any model update, retraining run, or change in input data. Automated AI guardrails are a requirement for current deployment realities. Superior AI governance platforms must function as a relentless, autonomous audit layer that is always active.
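Drift detection is often implemented with the Population Stability Index (PSI), which compares the binned distribution of a feature or score in production against a reference window: PSI is the sum over bins of (actual share − expected share) × ln(actual share / expected share). A commonly cited rule of thumb treats PSI above 0.25 as significant drift. That cutoff, the bin edges, and the `check_drift` helper below are assumptions for illustration; in a real platform the alert would open a ticket or block a release rather than return a string.

```python
import math
from bisect import bisect_right
from typing import Optional


def bin_shares(values: list[float], edges: list[float], eps: float = 1e-6) -> list[float]:
    """Share of values in each bin defined by sorted interior edges; eps avoids log(0)."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    total = len(values)
    return [max(c / total, eps) for c in counts]


def psi(reference: list[float], production: list[float], edges: list[float]) -> float:
    """Population Stability Index of production data against a reference window."""
    expected = bin_shares(reference, edges)
    actual = bin_shares(production, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def check_drift(reference, production, edges, threshold: float = 0.25) -> Optional[str]:
    """Return an alert message when PSI exceeds the rule-of-thumb threshold, else None."""
    score = psi(reference, production, edges)
    if score > threshold:
        return f"DRIFT ALERT: PSI={score:.3f} exceeds {threshold}"
    return None
```

The reference window would typically be the evaluation or training distribution; the production sample must be real traffic, which is why the integration section below insists on access to production data.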

4. Explainability and Transparency

The core demand is simple. Regulators, auditors, and stakeholders require explanations. Effective AI governance platforms must satisfy all audiences, delivering content in distinct formats.

Explainable AI (XAI) surfaces how inputs influence model outputs. Think of XAI as the transparent hood on a supercar: you see the engine working. Explainability reports must be audit-ready and automatically generated to support compliance with sector-specific requirements such as DORA (the EU's Digital Operational Resilience Act for financial entities) and SR 11-7 (the US Federal Reserve's supervisory guidance on model risk management). Requirements like these are non-negotiable for any AI governance platform serving regulated industries.

Technical explainability without business-level translation is a clear bottleneck. We require AI governance platforms where risk and compliance teams can interpret every governance report without needing a data scientist in the room. This ensures organizational fluency in risk, rather than leaving the compliance team isolated.
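One model-agnostic XAI technique is permutation importance: shuffle one input column at a time and measure how much a performance score drops. The sketch below pairs it with a one-sentence plain-language summary of the kind a compliance reviewer could read without a data scientist present. The function names, the seeded shuffling, and the summary wording are our own assumptions; richer attribution methods such as SHAP also exist.

```python
import random
from typing import Callable, Sequence

Row = dict[str, float]


def accuracy(model: Callable[[Row], int], rows: Sequence[Row], labels: Sequence[int]) -> float:
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(labels)


def permutation_importance(model, rows, labels, features, n_repeats=20, seed=0):
    """Mean accuracy drop when each feature's column is shuffled across rows."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = {}
    for feat in features:
        drops = []
        for _ in range(n_repeats):
            column = [r[feat] for r in rows]
            rng.shuffle(column)
            shuffled = [{**r, feat: v} for r, v in zip(rows, column)]
            drops.append(baseline - accuracy(model, shuffled, labels))
        importances[feat] = sum(drops) / n_repeats
    return importances


def plain_language_summary(importances: dict[str, float]) -> str:
    """Translate the ranking into a sentence a compliance reviewer can read."""
    top = max(importances, key=importances.get)
    unused = [f for f, v in importances.items() if v == 0]
    text = f"The decision depends most on '{top}'."
    if unused:
        text += f" Shuffling {', '.join(unused)} had no measurable effect on accuracy."
    return text
```

For example, a toy model that approves whenever `income` exceeds a threshold will show zero importance for any other column, and the summary states that in one sentence.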

5. Automation and Scalability

Manual governance fails. This is a matter of statistics rather than budget. Organizations running 200 models, or facing tightening regulatory pressure, require immediate, structural automation. Automation means two things: risk assessments triggered by model changes, and documentation generated as a byproduct of operational activity. Compliance becomes an integrated step rather than a separate project, and governance runs in parallel with every stage of the AI lifecycle.
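"Documentation generated as a byproduct" can be sketched as a decorator that writes an audit record every time a governed operation runs, so the evidence trail exists whether or not anyone remembers to write it. The `governed` decorator, the record fields, and the in-memory `AUDIT_LOG` list are hypothetical; a real deployment would write to durable, tamper-evident storage.

```python
import functools
import json
from datetime import datetime, timezone

AUDIT_LOG: list[str] = []  # stand-in for durable, append-only storage


def governed(action: str):
    """Append an audit entry (keyword arguments, outcome, UTC timestamp) for every call."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            entry = {
                "action": action,
                "function": func.__name__,
                "kwargs": kwargs,
                "timestamp": datetime.now(timezone.utc).isoformat(),
            }
            try:
                result = func(*args, **kwargs)
                entry["outcome"] = "success"
                return result
            except Exception as exc:
                entry["outcome"] = f"failed: {exc}"
                raise
            finally:
                # Runs on success and failure alike, so no call escapes the log.
                AUDIT_LOG.append(json.dumps(entry, default=str))
        return wrapper
    return decorator


@governed("model_promotion")
def promote_model(name: str, version: str) -> str:
    return f"{name}:{version} promoted"
```

The design choice is that failures are recorded too: an audit trail that only captures successful promotions would hide exactly the events an auditor most wants to see.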

Scalability requires the system to manage its own workload. Governance depth must adjust automatically by risk tier. A low-risk model requires automated documentation. A high-risk model demands continuous performance monitoring and detailed XAI reporting. The platform determines the required oversight automatically. Automation ensures this demand is met without scaling the compliance team at the same rate, avoiding overextension.
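Risk-proportionate governance depth can be expressed as a policy table the platform consults automatically. The tier names, control names, and the rule that each tier inherits the controls of the tiers below it are illustrative assumptions that mirror the examples above, not a regulatory mapping.

```python
from enum import Enum


class Tier(str, Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical policy: controls introduced at each tier; higher tiers inherit lower ones.
BASE_CONTROLS = {
    Tier.LOW: ["automated_documentation"],
    Tier.MEDIUM: ["pre_deployment_evaluation"],
    Tier.HIGH: ["continuous_monitoring", "xai_report"],
}

ORDER = [Tier.LOW, Tier.MEDIUM, Tier.HIGH]


def required_controls(tier: Tier) -> list[str]:
    """Accumulate controls from the lowest tier up to and including `tier`."""
    controls: list[str] = []
    for level in ORDER[: ORDER.index(tier) + 1]:
        controls.extend(BASE_CONTROLS[level])
    return controls


def missing_controls(tier: Tier, completed: set[str]) -> list[str]:
    """Controls still outstanding before a model at this tier may ship."""
    return [c for c in required_controls(tier) if c not in completed]
```

Because the oversight required is derived from the tier rather than decided case by case, adding a hundred low-risk models adds automated documentation, not a hundred manual reviews.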

Integration with Existing Systems

Capable platforms fail if they sit outside the deployment pipeline. Integration determines whether governance actually executes, simple as that. An AI governance platform must be architecturally embedded: select a tool that communicates with your tech stack so that approvals and deployments cannot fall out of sequence.

Compatibility with MLOps Pipelines and Data Platforms: The Seamless Handoff for AI Governance Platforms

A governance platform must connect directly to MLOps infrastructure, model registries, and data pipelines, because manual processes are skipped when release pressure is high. The integration must be deep enough that governance controls are enforced at the deployment step itself. AI governance platforms must also handle traditional machine learning, generative AI, and AI agents without separate workflows; siloed strategies create organizational fragmentation and exploitable gaps.
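Enforcement "at the deployment step" means the deploy path itself refuses to proceed without a current approval, rather than relying on someone to check a dashboard. This minimal sketch assumes a hypothetical approvals store keyed by exact model name and version; the `deploy` function, `Approval` record, and `GovernanceBlocked` exception are illustrative names, and in a real pipeline the check would run as a required CI/CD stage.

```python
from dataclasses import dataclass


class GovernanceBlocked(Exception):
    """Raised when a deployment lacks a valid governance approval."""


@dataclass(frozen=True)
class Approval:
    model: str
    version: str
    gate_passed: bool


def deploy(model: str, version: str, approvals: dict[tuple[str, str], Approval]) -> str:
    """Deploy only if this exact model version has a passing approval on record."""
    approval = approvals.get((model, version))
    if approval is None:
        raise GovernanceBlocked(f"{model}:{version} has no governance record")
    if not approval.gate_passed:
        raise GovernanceBlocked(f"{model}:{version} failed its evaluation gate")
    # Hand-off to the actual deployment tooling would happen here.
    return f"deployed {model}:{version}"
```

Keying approvals by exact version is what makes the control non-bypassable in practice: retraining a model without re-running the gate produces a new version with no record, and the release stops.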

Governance decisions require access to production data distributions. Platforms relying on sample data operate blindly in the real world. AI governance platforms must ingest production data to perform meaningful drift detection, bias measurement, and continuous validation. Anything less is a calculated ignorance of deployed reality. The handoff must be seamless, auditable, and non-bypassable.

Questions Buyers Should Ask AI Governance Platform Vendors

We are always suspicious when vendors announce a new platform with “full coverage.” They frequently withhold what was omitted, and those omissions may well be dictated by internal engineering priorities and PR timelines. The following interrogation framework for evaluating AI governance platforms looks for structural truth rather than a brochure, so let’s get down to business:

How Do These AI Governance Platforms Support End-to-End Governance?

This is the most important question. Push vendors to demonstrate coverage across all five AI lifecycle stages in their AI governance platforms: design and risk classification, development, pre-deployment evaluation, production monitoring, and retirement. Platforms that cover only one or two stages require manual coordination with other tools to fill the gaps. This reintroduces the operational failure the platform was meant to close; you’re essentially back at the beginning.

Ask to see the live AI inventory in action; what you see live will often differ from what appears in the sales deck. If the inventory requires manual input to stay current, the rest of the platform’s governance guarantees rest on a foundation that will drift from reality within weeks of deployment. Demand a demonstration of governance enforced at the retirement stage as well: the ability to decommission models safely is a crucial risk management component of any AI governance platform.

What Types of Models and Use Cases Are Supported by Your AI Governance Platforms?

You need a specific, uncompromising answer. Ask the vendor to show evaluation and monitoring for a traditional machine learning model and an LLM-powered RAG application in the same platform session. The platform must prove it handles both model types fluently. Many platforms were built for one or the other; very few handle both at the evaluation depth that regulated industries require.

For generative AI, confirm that the platform evaluates hallucination rates, prompt injection vulnerability, and output safety. These are the dominant risk categories in enterprise deployments right now. We need proof that AI governance platforms handle the unique, non-deterministic risks of foundation models; generic compliance checks cover only a small part of that risk.
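As a deliberately crude illustration of one generative-AI check, the sketch below flags answer sentences in a RAG pipeline whose content words are poorly supported by the retrieved context. Lexical overlap is our own simplification chosen to keep the example self-contained; more robust hallucination detection uses natural-language-inference models or LLM-based judges. The stopword list, the 0.5 threshold, and the function names are assumptions.

```python
import re

STOPWORDS = {"the", "a", "an", "is", "are", "was", "of", "to", "in", "and", "or", "for", "on", "with", "by", "it"}


def content_words(text: str) -> set[str]:
    """Lowercased alphanumeric tokens minus a small stopword list."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def unsupported_sentences(answer: str, context: str, min_support: float = 0.5) -> list[str]:
    """Return answer sentences whose content words mostly do not appear in the context."""
    context_vocab = content_words(context)
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        words = content_words(sentence)
        if not words:
            continue
        support = len(words & context_vocab) / len(words)
        if support < min_support:
            flagged.append(sentence)
    return flagged
```

A check like this would run on every generated answer in production, with the flag rate tracked over time as one input to the hallucination metrics a vendor should be able to show.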

How Do AI Governance Platforms Handle Regulatory Compliance and Audits?

Be wary of a company that makes umbrella claims such as “full compliance.” Require specificity on the regulatory frameworks the platform maps to: the EU AI Act, the NIST AI RMF, ISO/IEC 42001, and sector-specific requirements like SR 11-7. Ask whether the mapping is automatic and continuous or periodic and manual. Automatic is the only acceptable answer for organizations under active regulatory scrutiny.

On audit readiness, demand that the vendor generate an audit report during the demo using real platform data. Audit-ready documentation that requires a separate manual compilation process is incomplete. We demand one-click audit evidence, generated instantly from AI governance platforms’ continuous monitoring logs.
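"One-click audit evidence" implies the report is a pure function of records the platform already keeps. This sketch assembles a per-model summary from JSON-lines monitoring entries; the log schema (`model`, `check`, `passed`, `timestamp`) and the `build_audit_report` helper are hypothetical examples, not a specific product's format.

```python
import json
from collections import defaultdict


def build_audit_report(log_lines: list[str], period: str) -> dict:
    """Summarise JSON-lines monitoring records into per-model evidence for an auditor."""
    per_model = defaultdict(lambda: {"checks_run": 0, "failures": [], "last_check": None})
    for line in log_lines:
        rec = json.loads(line)
        summary = per_model[rec["model"]]
        summary["checks_run"] += 1
        if not rec["passed"]:
            summary["failures"].append({"check": rec["check"], "at": rec["timestamp"]})
        # ISO-8601 strings in a single timezone sort chronologically.
        if summary["last_check"] is None or rec["timestamp"] > summary["last_check"]:
            summary["last_check"] = rec["timestamp"]
    return {"period": period, "models": dict(per_model)}
```

Since nothing is compiled by hand, the same function can run during a vendor demo on real platform data, which is exactly the test proposed above.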

What Level of Automation Is Provided in AI Governance Platforms?

The critical distinction is between automation that reduces work and automation that eliminates failure modes. Ask specifically: Are risk assessments in your AI governance platforms triggered automatically by model changes? Is documentation generated as a byproduct of normal governance activity? Are compliance maps updated as regulatory frameworks evolve? These are the critical automations that determine whether your governance posture remains current without constant manual intervention.

Apply the responsible AI innovation best practices test: If a key team member left tomorrow, would the governance program continue to function at the same level? If the answer is affirmative, the platform’s automation is structural. Structural automation in AI governance platforms must remove human fallibility from the critical path of compliance.

How Do AI Governance Platforms Scale Across Multiple Teams and Business Units?

AI governance platforms fail at scale for two reasons: they lack the technical capacity to handle model volume, or they lack the organizational design to handle team diversity. Evaluate both.

On the technical side, ask about performance under load: what is the monitoring latency at 100 models? At 500? Demand hard numbers. On the organizational side, ask how AI governance platforms handle role-based access controls, cross-functional review workflows, and the handoff between data science, risk, and compliance teams. Responsible enterprise governance requires shared visibility across all three functions. Separate dashboards requiring manual reconciliation are a recipe for fragmentation and failure.
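Shared visibility with role-based control can be sketched as a permission map in which all three functions read the same records but only specific roles can perform specific actions. The role names, action names, and the two-signature release rule are hypothetical illustrations of the cross-functional workflow described above.

```python
from enum import Enum


class Role(Enum):
    DATA_SCIENCE = "data_science"
    RISK = "risk"
    COMPLIANCE = "compliance"


# Hypothetical permission map: every role reads the same inventory; actions are split by role.
PERMISSIONS: dict[Role, set[str]] = {
    Role.DATA_SCIENCE: {"read", "submit_for_review"},
    Role.RISK: {"read", "approve_risk_assessment"},
    Role.COMPLIANCE: {"read", "sign_off_release", "export_audit_report"},
}


def authorize(role: Role, action: str) -> bool:
    return action in PERMISSIONS[role]


def release_allowed(signatures: dict[str, Role]) -> bool:
    """True only if each required step was performed by a role authorised for it."""
    required = ("approve_risk_assessment", "sign_off_release")
    return all(step in signatures and authorize(signatures[step], step) for step in required)
```

One permission map serving all three teams is the opposite of separate dashboards: there is nothing to reconcile because everyone is looking at the same records.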

The Standard of Authenticity for AI Governance Platforms

We all know that most platforms are partial solutions dressed as complete ones. But here is the truth: they leave buyers to manually bridge the gaps. This is a structural cost hidden in the contract, and it’s a difficult reality to accept.

The evaluation standard is a binary question: does your AI governance platform run governance continuously, automatically, across the full AI lifecycle, for both traditional machine learning and generative AI models, with audit-ready output? If any one of these conditions is false, you are looking at a compliance tool that still requires a separate governance program. This is the only question that matters for long-term organizational safety.

Lumenova AI meets this standard. We combine a live AI inventory, automated risk assessment, deep model evaluation, continuous production monitoring, and compliance mapping into a single connected system. Winners in the world of AI governance platforms possess the most automated, continuous, and auditable enforcement of policies. We offer structural truth, rather than a brochure.

If you are actively evaluating AI governance platforms and want to see these capabilities demonstrated against your actual model portfolio, we suggest you request a personalized AI demo and bring your hardest evaluation questions. Let us sit down and marvel at the capacity of a platform that actually delivers what others only promise.

Frequently Asked Questions

What are AI governance platforms?

AI governance platforms are software solutions that manage the policies, controls, evaluation, monitoring, and compliance functions required to operate AI systems responsibly at scale. They automate lifecycle controls from model design and risk classification through production monitoring and regulatory reporting. Unlike point tools that address a single governance function, full AI governance platforms cover the entire AI lifecycle in a single connected system.

Why do enterprises need AI governance platforms?

Regulatory mandates, reputational exposure, and the operational reality of model drift make manual governance structurally unsustainable. Beyond a trivial model count, manual governance is a known failure point. The EU AI Act imposes continuous risk management obligations on high-risk AI. NIST AI RMF and ISO/IEC 42001 set clear expectations for documentation and accountability. Without AI governance platforms to automate these requirements, organizations face a permanent compliance backlog that grows with every new model deployment.

How should enterprises evaluate AI governance platforms?

Evaluate AI governance platforms on five dimensions: full lifecycle coverage from inventory to retirement; model type breadth covering both traditional machine learning and generative AI; automation depth, where risk assessments are triggered by model changes; regulatory coverage spanning the EU AI Act, NIST AI RMF, and ISO/IEC 42001; and integration that is architecturally embedded in your deployment pipeline.

What role does explainable AI play in AI governance platforms?

Explainable AI (XAI) is the technical foundation that allows governance platforms to satisfy both regulators and internal business stakeholders. Without explainability, a model is a black box, and governance amounts to documentation theater. XAI techniques surface how inputs influence outputs so that risk and compliance teams can verify a model is operating within its intended parameters, and so that auditors can confirm adherence to regulatory standards requiring transparency.

Why must a platform support both traditional machine learning and generative AI?

Support for both model types is a specific, uncompromising requirement. A legitimate, complete system must provide forensic evaluation, continuous monitoring, and automated compliance for both traditional machine learning and generative AI models, including RAG architectures, within a single connected environment. We demand this unified approach; managing these models in isolation is a technical abdication that creates siloed strategies, organizational fragmentation, and dangerous exploitability.