April 9, 2026

Automated AI Governance Platforms: What They Are and Why Every AI-Deploying Organization Needs One


What This 2026 Guide Establishes on Automated AI Governance

- The necessary shift from periodic, human-led review to continuous, system-driven control as AI deployment scales.

- A clear, architectural breakdown of the four non-negotiable components of a complete automated platform: AI Inventory, Automated Risk Assessment, Offline Evaluation, and Continuous Monitoring.

- The structural reason why manual oversight fails to meet the explicit, continuous control mandates of regulatory frameworks like the EU AI Act and NIST AI RMF.

The conventional narrative frames AI governance as a purely administrative exercise, an expensive, necessary evil that follows innovation. This reading misses the shift now underway: governance has stopped being an optional compliance task and has become a mandatory operational layer.

Enterprises that have scaled AI deployment from scattered pilots to production fleets (running dozens or hundreds of models across multiple business units and overlapping regulatory jurisdictions) have outgrown the manual oversight model: governance infrastructure sufficient for ten models collapses when faced with three hundred.

At that scale and deployment velocity, manual oversight is structurally inadequate. Automated AI governance platforms have emerged to close this gap, not by merely documenting compliance after the fact, but by replacing periodic, human-led reviews with continuous, system-driven controls that operate across every stage of the AI lifecycle.

Lumenova AI’s automated AI governance platform manages the full AI lifecycle, from model inventory to risk classification to continuous monitoring, providing organizations with persistent, real-time control over every AI system in production.

What Is an Automated AI Governance Platform?

An automated AI governance platform is software designed to manage the complete lifecycle of AI systems, methodically covering inventory tracking, risk assessment, pre-deployment evaluation, compliance documentation, and continuous monitoring, all without relying on manual processes or quarterly reviews. It provides organizations with real-time, actionable visibility and control over every AI model operating in production.

The category is consistently and incorrectly conflated with adjacent, insufficient tools. MLOps platforms exist to track model performance metrics; data catalogs focus purely on data lineage. General GRC platforms are document repositories for policy texts, including risk management documentation. An automated AI governance platform is tasked with something entirely distinct: it maintains active, automated oversight of AI systems from their initial design concept through retirement, applying necessary governance controls at each stage rather than simply documenting compliance that happened somewhere else in the pipeline.

AI inventory management forms the non-negotiable foundation of this system. The platform maintains a live, constantly updating register of every AI model the organization operates, detailing version, ownership, training data lineage, deployment environment, intended use, and regulatory classification. This registry updates automatically, in real time, the moment models are deployed, updated, or retired.

From this inventory, the platform runs risk assessments. It applies a defined framework, whether the EU AI Act, NIST AI RMF, ISO 42001, or a stringent custom internal standard, to classify each system, generate the necessary documentation, and flag only those elements that specifically require human review. Crucially, these automated risk assessments are triggered by material model changes, not by a calendar that an analyst remembered to set three months prior.

The difference between a live, automated governance system and a well-maintained document repository is the precise difference between knowing what your AI systems are doing right now and knowing what they were doing the last time someone manually ran a check.

Why Do Organizations Need Automated AI Governance?

The need for automated AI governance is no longer a strategic debate but a structural necessity, since the scale and velocity of modern AI deployment have rendered periodic manual oversight an exercise in professional negligence. Furthermore, regulatory frameworks, including the EU AI Act and the NIST AI RMF, call for documented, continuous governance that legacy manual review cycles simply cannot satisfy.

To understand how to move from policy to operational execution, see our guide on Implementing Responsible AI Governance in Modern Enterprises.

a) Regulatory Requirements Are Now Explicit and Unavoidable

The EU AI Act does not offer suggestions; it assigns risk tiers to AI systems and mandates documented, continuous management for those classified as high-risk. For high-risk systems, Article 9 requires a risk management system, and Article 17 requires providers to operate a quality management system. Both must apply continuously, throughout the operational lifespan of the system. Compliance becomes a mere documentation exercise only for organizations that have first automated the documentation process itself.

The NIST AI RMF, ISO 42001, and increasingly stringent sector-specific requirements in financial services, healthcare, and critical infrastructure add parallel, compounding obligations. The combined documentation burden across all these frameworks represents precisely the kind of workload that an automated platform absorbs as a byproduct of normal operation, and the kind of workload that guarantees a manual process falls permanently behind the compliance timeline.

b) Model Drift Operates in Silence

AI models, by their statistical nature, degrade; this is not a rare failure mode but the default behavior of a deployed model left unmonitored. The training data ages, input distributions change, and the statistical relationships the model learned at training time gradually stop reflecting the real-world patterns it encounters in production.

Across dozens of deployments, the pattern remains consistent: a model performs flawlessly at launch, accumulates silent drift over weeks, and by the time a human reviewer finally flags degraded outputs via a quarterly report, the model has already been making measurably worse, potentially harmful decisions for months. Continuous monitoring is structurally engineered to catch drift the moment it begins to surface. Quarterly audits, on the other hand, are designed to catch failure only after it has caused quantifiable harm.

c) AI Deployment Velocity Has Long Outpaced the Human Committee

McKinsey’s survey establishes the scale of this shift: 78 percent of organizations now use AI in at least one business function, up from the 55 percent reported in its 2023 survey. As these enterprises scale deployment via high-frequency Continuous Integration/Continuous Deployment (CI/CD) cadences, the manual oversight model collapses; governance processes reliant on human-scheduled reviews and committee sessions are, by design, relegated to the periphery of the operational pipeline.

An automated AI governance platform is, by necessity, designed to run inside the pipeline. Governance executes at deployment, at model update, and continuously in production, requiring no human intervention to trigger any of these essential regulatory steps.

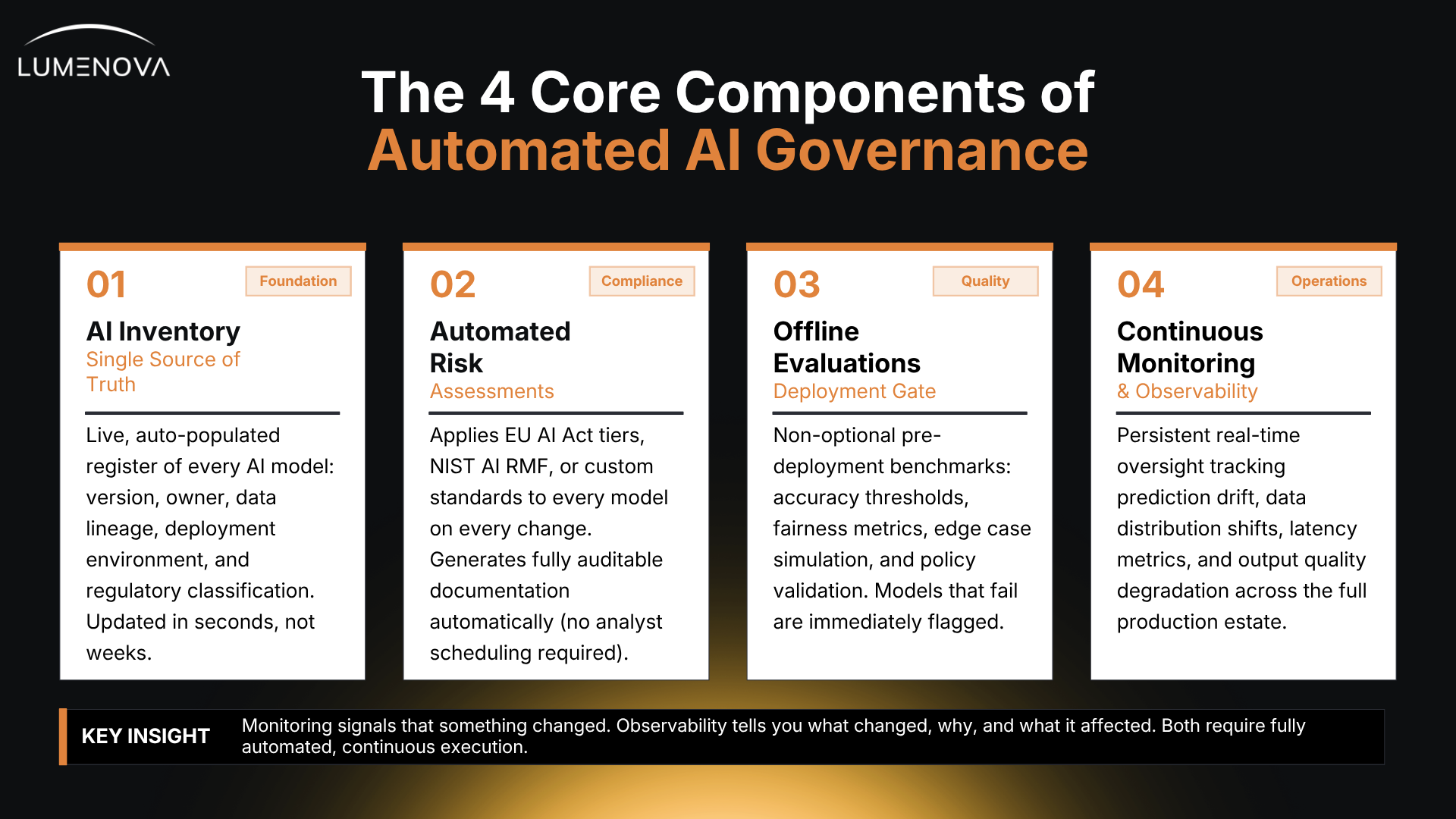

The 4 Core Components of an Automated AI Governance Architecture

A complete automated AI governance platform integrates four core components into a single, connected system that runs across the full AI lifecycle, rather than leaving isolated tools to be coordinated by hand.

1. AI Inventory: The Single Source of Truth

The live AI inventory is the foundation on which every other governance capability is built. It is a live, auto-populated, and complete register of every AI model and AI-enabled system the organization operates. An automated AI governance platform captures the essential metadata for each one (model version, owner, data lineage, deployment environment, intended use, and regulatory classification) and, crucially, updates it automatically as the model estate changes.

The operational difference between a live inventory and a human-maintained spreadsheet is the lag time between a model change and the governance record reflecting that change. In a live inventory, that lag is measured in seconds. In the spreadsheet model, it is measured by how long it takes a human analyst to remember to update it.
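To make the idea of an event-driven register concrete, here is a minimal sketch in Python. It is not a description of any vendor's implementation: the `ModelRecord` fields mirror the attributes listed above, while the class names, method names, and the in-memory storage are assumptions made purely for illustration. The point it shows is that the record changes as a side effect of a deploy or retire event, not because someone edits a spreadsheet.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


def _now() -> datetime:
    return datetime.now(timezone.utc)


@dataclass
class ModelRecord:
    """One inventory entry. Field names are illustrative, not a real schema."""
    name: str
    version: str
    owner: str
    data_lineage: str
    environment: str
    intended_use: str
    regulatory_class: str
    status: str = "deployed"
    updated_at: datetime = field(default_factory=_now)


class Inventory:
    """Hypothetical in-memory register, keyed by model name."""

    def __init__(self) -> None:
        self._records = {}

    def on_deploy(self, record: ModelRecord) -> None:
        # A deploy or update event replaces the current record for that model.
        record.status = "deployed"
        record.updated_at = _now()
        self._records[record.name] = record

    def on_retire(self, name: str) -> None:
        # Retired models stay in the register so the audit trail survives.
        record = self._records[name]
        record.status = "retired"
        record.updated_at = _now()

    def get(self, name: str) -> ModelRecord:
        return self._records[name]

    def active(self) -> list:
        return [r for r in self._records.values() if r.status == "deployed"]
```

In a real pipeline, `on_deploy` and `on_retire` would be called by the deployment tooling itself (for example, from a CI/CD hook), which is what keeps the lag between a model change and the governance record short.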

2. Automated Risk Assessments: Continuous, Not Calendar-Driven

Automated risk assessments apply a defined risk framework to every model, every time that model changes, without a human analyst having to schedule a review. The platform classifies each system against the EU AI Act’s risk tiers, NIST AI RMF categories, or a custom internal standard, generating the required documentation and producing a fully auditable record of the assessment automatically.

This operational reality is what Article 9 of the EU AI Act actually demands: a risk management system that operates continuously, produces documented output, and covers the full operational life of the AI system. An automated platform produces that output as a fundamental function of its normal operation.
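The difference between calendar-driven and change-driven assessment can be sketched in a few lines of Python. Everything here is an assumption for illustration: the domain-to-tier mapping is a toy stand-in and not a legal classification under the EU AI Act, and the choice of which fields count as "material" is hypothetical. What the sketch shows is the trigger logic: an assessment runs, and is logged, only when a material attribute of the model changes.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Toy mapping for illustration only; real classification needs legal review.
HIGH_RISK_USES = {"credit_scoring", "hiring", "medical_triage"}
LIMITED_RISK_USES = {"chatbot"}

# Fields whose change counts as "material" in this sketch (an assumption).
MATERIAL_FIELDS = ("version", "training_data", "intended_use")


@dataclass(frozen=True)
class Assessment:
    model: str
    tier: str
    needs_human_review: bool
    assessed_at: str


def classify(intended_use: str) -> str:
    if intended_use in HIGH_RISK_USES:
        return "high"
    if intended_use in LIMITED_RISK_USES:
        return "limited"
    return "minimal"


def is_material_change(old: dict, new: dict) -> bool:
    return any(old.get(f) != new.get(f) for f in MATERIAL_FIELDS)


def on_model_change(old: dict, new: dict, audit_log: list) -> Optional[Assessment]:
    """Re-assess only on a material change and append the result to the log."""
    if not is_material_change(old, new):
        return None
    tier = classify(new["intended_use"])
    assessment = Assessment(
        model=new["name"],
        tier=tier,
        needs_human_review=(tier == "high"),  # escalate only high-risk systems
        assessed_at=datetime.now(timezone.utc).isoformat(),
    )
    audit_log.append(assessment)
    return assessment
```

The append-only `audit_log` stands in for the auditable record: each assessment is timestamped when it is produced, so the documentation is generated as a side effect of the check.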

3. Offline Evaluations: The Non-Negotiable Deployment Gate

Before a model is allowed to reach production, the platform subjects it to non-optional, pre-defined benchmarks:

- accuracy thresholds

- fairness metrics

- output consistency testing

- edge case behavior simulation

- policy compliance validation

Models that meet the benchmark thresholds are automatically cleared for deployment. Models that fail are immediately flagged with the precise criteria that were not satisfied.

The evaluation result is logged automatically and versioned. When an incoming regulator or external auditor requests definitive evidence of pre-deployment testing, the documentation exists within the platform, timestamped and versioned, rather than being defensively assembled from scattered files after the fact.
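A deployment gate of this kind reduces to comparing measured metrics against pre-defined thresholds and recording exactly which ones failed. The Python sketch below assumes three hypothetical metrics and threshold values chosen only for illustration; the metric names, limits, and the `evaluate_gate` function are not taken from any specific platform.

```python
from dataclasses import dataclass

# Hypothetical thresholds: ("min", x) means the metric must be at least x,
# ("max", x) means it must not exceed x.
THRESHOLDS = {
    "accuracy": ("min", 0.90),
    "demographic_parity_gap": ("max", 0.05),
    "output_consistency": ("min", 0.95),
}


@dataclass(frozen=True)
class GateResult:
    model_version: str
    cleared: bool
    failures: tuple


def evaluate_gate(model_version: str, metrics: dict) -> GateResult:
    """Clear the model only if every threshold is met; list each failure."""
    failures = []
    for name, (kind, limit) in THRESHOLDS.items():
        value = metrics.get(name)
        if value is None:
            # A metric that was never measured counts as a failure.
            failures.append(f"{name}: missing")
        elif kind == "min" and value < limit:
            failures.append(f"{name}: {value} is below required {limit}")
        elif kind == "max" and value > limit:
            failures.append(f"{name}: {value} exceeds allowed {limit}")
    return GateResult(model_version, cleared=not failures, failures=tuple(failures))


result = evaluate_gate(
    "2.3.0",
    {"accuracy": 0.87, "demographic_parity_gap": 0.03, "output_consistency": 0.97},
)
print(result.cleared, result.failures)
```

Treating a missing metric as a failure is a deliberate design choice in this sketch: a gate that silently passes unmeasured criteria would defeat its purpose. Persisting each `GateResult` with a timestamp would give the versioned evidence trail described above.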

4. Continuous Monitoring and Observability: Catching the Silent Shift

The observability function is structurally engineered to maintain persistent, real-time oversight across the entire production estate, methodically tracking prediction drift, data distribution shifts, latency metrics, and critical indicators of output quality degradation or bias.

Monitoring merely signals that something has changed. Observability, the higher-level function, is what tells you what changed, why it changed, and what it immediately affected. Both capabilities are necessary, but neither works as a complete governance mechanism without continuous, fully automated execution.

The platform automates the governance layer that manual processes cannot sustain at enterprise scale, transforming full lifecycle AI control from an aspirational business goal into a technical, operational reality.
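As one illustration of how a data distribution shift can be quantified, the sketch below computes the Population Stability Index (PSI), a commonly used drift statistic. The article does not say which statistic any particular platform uses, so PSI is an assumption here, as are the bin count and the 0.25 alert level, which is a widely quoted rule of thumb and not a standard.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index of `actual` against the `expected` baseline.

    Bins are equal-width over the baseline's range; values outside that
    range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for a constant baseline

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


ALERT_LEVEL = 0.25  # rule-of-thumb threshold, assumed for this sketch

baseline = [i / 100 for i in range(100)]    # inputs seen at training time
production = [v + 0.5 for v in baseline]    # the same inputs, shifted upward

if psi(baseline, production) > ALERT_LEVEL:
    print("drift alert: input distribution has shifted")
```

Run on a schedule of minutes instead of quarters, a check like this is the monitoring half of the picture: it signals that something moved. Attributing the shift to a specific feature, upstream data source, or model version is the observability half, and needs the inventory and lineage records as context.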

Governance Must Run Parallel: The Flaw of the Afterthought Audit

An automated AI governance platform is structurally integrated across every stage of the AI lifecycle, running from the initial design and risk classification through development, pre-deployment evaluation, deployment, production monitoring, and eventual retirement. Governance, therefore, runs in parallel with the development process at each transition point, instead of being applied as a post-mortem audit.

Applying governance only at the point of deployment is, quite simply, compliance theater. The crucial, high-impact decisions that determine how an AI system behaves, what data it ingests, what high-stakes decisions it influences, and what material risks it carries are all made during the design and development phases. A governance platform that only enters the picture at release is merely auditing those core decisions after they have been functionally locked in.

Our Responsible AI (RAI) platform enters at the earliest stage: design. Before training even begins, the intended system is classified against regulatory tiers. During development, policy guardrails are active and binding. At pre-deployment, offline evaluations gate the release. At deployment, the inventory updates automatically. In production, monitoring begins immediately. At retirement, the deprecation process is fully documented with a complete audit trail.

The platform features integrations with core tools, connecting the governance layer directly to the engineering tools that teams are already operating within. Governance runs inside the existing workflow, not as a parallel process that engineering teams are forced to manually feed.

Analytical Distinction: AI Governance vs. AI Risk Management

AI governance constitutes the full, expansive framework of policies, system controls, and accountability structures that collectively determine how AI systems are built, deployed, and managed across their entire operational lifecycle. AI risk management is a single, focused function operating within that larger framework, mainly concerned with identifying and methodically assessing the risks individual AI systems carry. To misunderstand this relationship is to misunderstand the whole premise: risk management, when isolated, lacks the structural accountability required for effective ownership, while governance, absent a risk mechanism, is merely a policy framework.

For a deeper dive into establishing the initial governance framework, see our article on How to Build an AI Use Policy to Safeguard Against AI Risk.

Organizations persistently and incorrectly treat risk management as the complete governance function. An isolated risk assessment is run, the resulting report is filed away, and the system is mistakenly deemed governed. That assessment answers only one narrow question: how risky is this model at this particular moment? Governance, however, answers a much broader question: how do we maintain control over this model across its entire operational lifecycle as its inputs shift, as the model itself changes, and as the regulatory environment around it continues to evolve?

Our platform’s AI risk management capability is one function within the broader governance architecture. The risk assessment feeds the inventory record, informs the monitoring thresholds, and generates the compliance documentation required by regulation. It is a vital node within a connected system, and it is that connection that elevates it to governance rather than a one-time intellectual exercise.

The Decision Point: Choosing the Governance Architecture

Choosing an automated AI governance platform mandates evaluating lifecycle coverage as the absolute first priority. The correct platform handles inventory, risk assessment, pre-deployment evaluation, and continuous monitoring as a singular, connected system; it must integrate seamlessly with existing ML infrastructure, and it must generate compliance documentation that precisely aligns with the full spectrum of regulatory frameworks the organization faces.

The comprehensive evaluation must begin with the architecture, not a feature checklist; the relevant platform features are detailed in our guide Features You Need in an AI Governance Platform. The single relevant question is whether the platform governs the full AI lifecycle or whether it is limited to one stage. Platforms that cover only one stage well require manual integration with other tools to cover the rest of the mandate, and the coordination between those disparate tools reintroduces the manual work and the operational gap the platform was ostensibly purchased to close.

Four high-leverage questions immediately settle the evaluation:

- Does the platform maintain a live AI inventory or require manual updates?

- Does it run risk assessments automatically on model changes or only on a calendar schedule?

- Does it enforce deployment gating with offline evaluations, or does it sit uselessly outside the deployment pipeline?

- Does it monitor production continuously or only generate static reports when a human requests them?

The Governance Infrastructure That Enterprise AI Now Requires

Automated AI governance platforms are the new operational standard for any organization serious about AI deployment at scale. The regulatory calendar is locked in. The EU AI Act’s enforcement dates are established and approaching quickly. NIST AI RMF, though formally voluntary, is increasingly treated as a baseline expectation among federal contractors and their supply chains. Organizations that continue to treat governance merely as a documentation exercise rather than a binding operational function will inevitably encounter the gap between those two things when enforcement arrives.

The analytical case for automated AI governance is straightforward and non-negotiable: the sheer scale and velocity of modern AI deployment necessitate a system that runs governance continuously, automatically, and across the full lifecycle. An automated AI governance platform provides that system today. The inventory is live. The risk assessments are automatic. The monitoring is continuous. The documentation is an inescapable byproduct of governance that is actually happening, not a set of files assembled defensively to suggest that it did.

Governing AI manually at enterprise scale produces the comforting appearance of oversight, and the guaranteed, costly reality of a systemic gap. Lumenova AI closes the gap completely.

Frequently Asked Questions

What is an automated AI governance platform?

An automated AI governance platform is software that manages the complete lifecycle of AI systems, covering inventory, risk assessment, pre-deployment evaluation, continuous monitoring, and compliance documentation, without requiring manual processes to initiate or record each step. It gives organizations persistent, real-time control over every AI system they operate.

How does an automated AI governance platform work?

The platform maintains a live inventory of all AI systems, applies a defined risk framework to classify each one automatically, runs evaluations before deployment, monitors behavior in production continuously, and generates compliance documentation as a byproduct of normal operation. Human input governs decisions, not the workflow that produces information for those decisions.

What is the difference between AI governance and AI risk management?

AI governance is the full framework of policies, controls, and accountability structures that determine how AI systems are built, deployed, and managed across their operational life. AI risk management is one function within that framework, focused on identifying and assessing the risks individual systems carry. Risk management without governance has no owner. Governance without risk management has no mechanism.

Why do organizations need automated AI governance?

Because the scale and velocity of modern AI deployment have made periodic manual oversight structurally inadequate. Organizations running dozens or hundreds of AI models on CI/CD cadences require governance that runs in parallel with deployment. Regulations, including the EU AI Act, require documented, continuous control that manual review cycles cannot satisfy.

What are the core components of an automated AI governance platform?

A complete automated AI governance platform includes AI inventory management, automated risk assessments, pre-deployment offline evaluations, continuous monitoring and observability, and compliance documentation generation. These capabilities run as a connected system across the full AI lifecycle rather than as isolated tools requiring manual coordination between them.

Which regulations and frameworks do automated AI governance platforms support?

Automated AI governance platforms support compliance with the EU AI Act, including the Article 9 risk management system requirement and the Article 17 quality management documentation requirement. They also map to NIST AI RMF, ISO 42001, and sector-specific frameworks in financial services, healthcare, and critical infrastructure.

How should an organization choose an automated AI governance platform?

Evaluate lifecycle coverage first. The platform should maintain a live inventory, run risk assessments automatically on model changes, gate deployment with evaluations, and monitor production continuously. Platforms that cover only one of these stages require manual coordination with other tools to cover the rest, which reintroduces the operational gap the platform was meant to close.