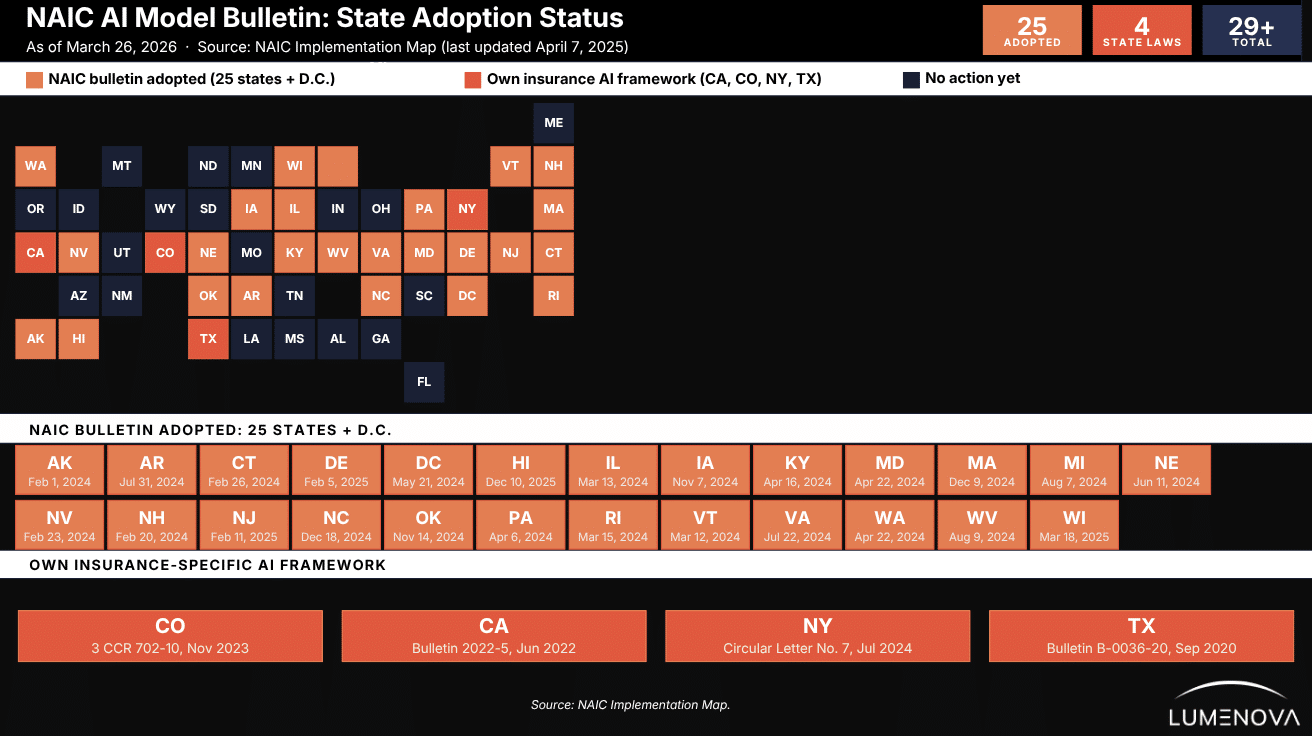

Twenty-five states and Washington, D.C., have now adopted the NAIC AI Model Bulletin. The NAIC Executive Committee and Plenary formally adopted the bulletin on December 4, 2023. By April 2024, 11 jurisdictions had issued their own bulletins: Alaska, Connecticut, Illinois, Kentucky, Maryland, Nevada, New Hampshire, Pennsylvania, Rhode Island, Vermont, and Washington. By March 2025, that number had more than doubled, and adoption has continued into 2026.

Here is what the NAIC AI Model Bulletin actually does. In its opening paragraph, with no preamble, it reminds every licensed insurer that decisions impacting consumers that are made using AI systems must comply with all applicable insurance laws and regulations. No carve-out for algorithms. No exemption for third-party tools. The legal standard does not change. What changes is how thoroughly you have to prove compliance with it.

(If you work in a regulated industry, you may already be familiar with the broader challenge covered in our article on 3 Hidden Risks of AI for Banks and Insurance Companies.)

We know what compliance teams are thinking right now, because we have heard both of these exits:

– “We use a third-party tool; that’s their liability.”

– “Our models passed validation, we’re covered.”

The bulletin was written specifically to close both of those doors. Section 4 (the examination section) lists exactly what lands on a regulator’s desk when they come knocking. It is the most important part of the document. It is also reliably the section most people skip.

We advise against skipping it. Below, we go through the bulletin section by section: its main ideas, what it defines, what it requires from your governance program, and what examiners will actually ask for.

Key Takeaways for Insurers

- The AIS Program (Artificial Intelligence System Program) is the written governance framework that the bulletin requires every insurer to build, implement, and maintain. It is the core compliance deliverable: everything else in the bulletin flows from it.

- If you are licensed in any adopting jurisdiction, the NAIC AI Model Bulletin applies to you.

- The legal standard has not changed. What changed is how thoroughly you have to prove you meet it.

- Using a third-party AI tool does not transfer your liability. The vendor’s compliance problem is yours.

- Passing validation is not enough. Validation must be independent, documented, and done before deployment.

- The higher the stakes of the AI decision, the stricter the controls required.

- Section 4 is what regulators actually use. Build your program so you can hand over that documentation within 30 days.

- Telling consumers when AI affects their coverage or claims is a requirement, not a courtesy.

What Is the NAIC AI Model Bulletin?

The bulletin is addressed directly to all insurers licensed to do business in any adopting state. It is not addressed to IT departments or data science teams; it is addressed to insurers, as regulated entities.

Structurally, the document has four sections: an introduction with legislative authority, definitions, regulatory guidance (including the AIS Program requirements), and examination expectations. That last section is what gives the bulletin its teeth: it tells you exactly what a regulator will ask for during an investigation or market conduct action. Understanding how to structure AI governance across your organization is what makes those examination conversations manageable. We cover Section 4 in detail later in this guide.

Four states have their own insurance-specific AI frameworks, most of which predate the NAIC bulletin:

- Texas (Bulletin B-0036-20, September 2020)

- California (Bulletin 2022-5, June 2022)

- Colorado (3 CCR 702-10, effective November 2023)

- New York (Insurance Circular Letter No. 1, January 2024)

The NAIC bulletin sits alongside those frameworks, not above them. If you operate in multiple states, your program needs to meet the most stringent standard that applies to you.

Source: NAIC AI Model Bulletin Implementation Map, last updated March 3, 2026. The map reflects state action as of that date and does not reflect a determination as to whether enacted legislation contains all elements of the model.

What the NAIC AI Model Bulletin does NOT do

It does not create new substantive law. It does not override state-specific regulations. And per Section 4, insurers can demonstrate compliance “through alternative means, including through practices that differ from those described in this bulletin.” The goal is accountability, not prescription. For a broader view, see our overview of how the current landscape of AI regulations is shaping regulated industries.

What the Bulletin Covers and What It Does Not

Per Guideline 1.6 of the AIS Program guidelines (Section 3), the bulletin applies across the full insurance lifecycle: product development and design, marketing, underwriting, rating and pricing, case management, claim administration, and fraud detection. That is broad by design. If an AI system touches a regulated insurance decision, it is in scope.

What it does not do is tell you exactly how to build your governance program. Section 3 sets out guidelines, not mandates. An insurer can adopt the NIST AI Risk Management Framework, incorporate existing ERM structures, or build something from scratch, as long as it addresses the required elements. Our guide to the pros and cons of implementing the NIST AI RMF walks through exactly what that foundation looks like in practice. (Reference: NAIC AI Model Bulletin, Section 3, Guideline 1.5)

Key Definitions (Straight From the Bulletin)

Section 2 of the bulletin defines nine terms. These matter because regulators will use them precisely during examinations. Here are the six you will encounter most often, with the bulletin’s exact framing:

| Term | Definition |
|---|---|
| AI System (Section 2) | A machine-based system that can, for a given set of objectives, generate outputs such as predictions, recommendations, content, or other outputs influencing decisions in real or virtual environments. Designed to operate with varying levels of autonomy. |
| Predictive Model (Section 2) | The mining of historic data using algorithms and/or machine learning to identify patterns and predict outcomes that can be used to make or support the making of decisions. Underwriting scores, fraud flags, and claims propensity models all fall here. |
| Adverse Consumer Outcome (Section 2) | A decision by an insurer, subject to regulatory standards, that adversely impacts the consumer in a manner that violates those standards. This is the outcome the entire AIS Program exists to prevent, and the trigger for regulatory examination. |
| Model Drift (Section 2) | The decay of a model’s predictive performance over time, arising from changes in the definitions, distributions, or statistical properties between training data and live deployment data. Section 3.3 (c) requires this to be measured and monitored. |
| Degree of Potential Harm (Section 2) | The severity of the adverse economic impact a consumer might experience from an Adverse Consumer Outcome. This is the scaling variable. The bulletin explicitly says controls must be “commensurate” with this degree. Higher harm potential requires stricter controls. |
| Third Party (Section 2) | Any organization other than the insurer providing services, data, or resources related to AI. Insurer liability for outcomes from third-party systems is not transferred by contract. The NAIC AI Model Bulletin makes this explicit in Sections 3 and 4. |

Why “Degree of Potential Harm” Is the Most Important Definition

The bulletin uses this term to calibrate everything. Section 3 states that controls and processes should be “commensurate with both the risk of Adverse Consumer Outcomes and the Degree of Potential Harm to Consumers.” A fraud detection model that flags claims for review carries different obligations than an underwriting model that denies coverage. Your AIS Program needs to reflect that difference explicitly.

For a deeper look at how uncalibrated controls can lead to algorithmic discrimination, see our glossary entry on algorithmic discrimination.
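To make that calibration concrete, here is a minimal Python sketch of a tiering rule. The bulletin does not prescribe tiers, criteria, or control lists: the three-level `HarmTier` scale, the `assign_tier` criteria, and the control names below are hypothetical placeholders an insurer would replace with its own documented assessment.

```python
from enum import IntEnum


class HarmTier(IntEnum):
    """Hypothetical three-level scale for Degree of Potential Harm."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical control catalogue. Each tier adds controls on top of the tiers below it,
# so higher harm potential always means a strictly larger control set.
CONTROLS_BY_TIER = {
    HarmTier.LOW: ["inventory entry", "named owner", "drift monitoring"],
    HarmTier.MEDIUM: ["documented pre-deployment validation", "bias analysis"],
    HarmTier.HIGH: ["independent validation", "committee approval",
                    "plain-language adverse decision explanation"],
}


def assign_tier(affects_coverage_or_price: bool, human_reviews_output: bool) -> HarmTier:
    """Illustrative rule: automated decisions on coverage or price carry the most harm."""
    if affects_coverage_or_price and not human_reviews_output:
        return HarmTier.HIGH
    if affects_coverage_or_price or not human_reviews_output:
        return HarmTier.MEDIUM
    return HarmTier.LOW


def required_controls(tier: HarmTier) -> list[str]:
    """Return the cumulative control set for a tier."""
    controls: list[str] = []
    for level in HarmTier:  # iterates LOW, MEDIUM, HIGH in definition order
        if level <= tier:
            controls.extend(CONTROLS_BY_TIER[level])
    return controls


# A fraud model that only routes claims to human review vs. an automated denial model.
fraud_tier = assign_tier(affects_coverage_or_price=False, human_reviews_output=True)
underwriting_tier = assign_tier(affects_coverage_or_price=True, human_reviews_output=False)
print(fraud_tier.name, len(required_controls(fraud_tier)))                # LOW 3
print(underwriting_tier.name, len(required_controls(underwriting_tier)))  # HIGH 8
```

The design choice worth keeping is the cumulative structure: a higher tier can never end up with fewer controls than a lower one, which mirrors the bulletin’s “commensurate” language.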

What the AIS Program Must Actually Include

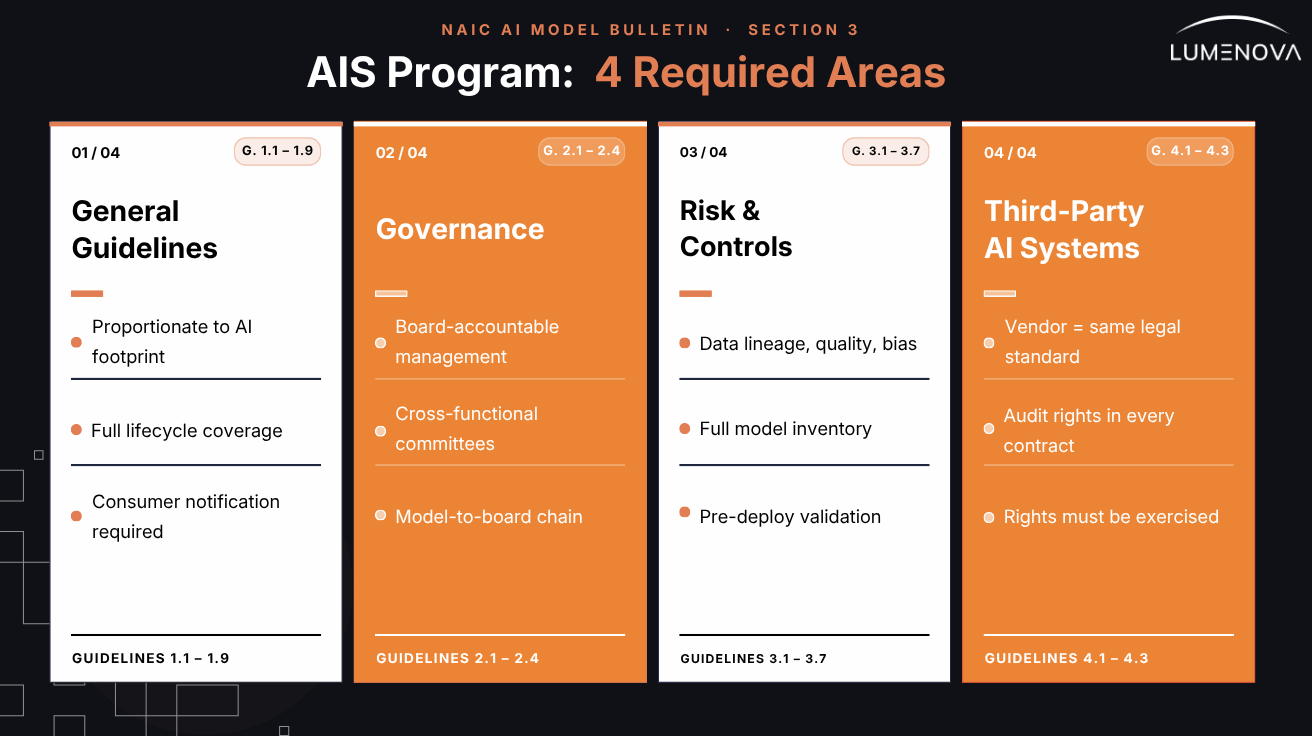

Section 3 of the bulletin defines the AIS Program, the written governance framework every insurer is expected to develop, implement, and maintain. It is organized into four areas.

If you are just beginning to think about what “real” AI governance looks like in a regulated environment, our article on implementing responsible AI governance in modern enterprises is a good place to start. Here is what each area requires, and where the common gaps tend to appear:

1. General Guidelines (Section 3, Guidelines 1.1-1.9)

The AIS Program must be proportionate to the insurer’s actual AI footprint. A regional insurer using one vendor credit scoring model is not expected to build the same program as a national carrier running proprietary ML models across underwriting, claims, and fraud detection. Guideline 1.4 says it plainly: controls should be “tailored to and proportionate with the Insurer’s use and reliance on AI.”

The program must also cover AI across its full lifecycle, from design and development through validation, deployment, ongoing monitoring, updating, and retirement. Guideline 1.7 specifies all of those phases explicitly. A program that only covers deployment is incomplete.

One requirement that often gets missed: Guideline 1.9 requires that the program include processes for notifying consumers that AI systems are in use, with access to “appropriate levels of information based on the phase of the insurance life cycle.”

2. Governance (Section 3, Guidelines 2.1-2.4)

Governance under the bulletin means documented accountability, not an AI ethics statement on a website. Guideline 1.3 requires that responsibility for the AIS Program vest with senior management accountable to the board. That is the starting point. For a practical breakdown of what a governance structure actually needs to contain, our guide to the 7 components of an effective AI governance framework covers this in detail.

Beyond that, Guideline 2.3 gets specific: the insurer should establish committees drawing from business units, actuarial, data science, underwriting, claims, compliance, and legal. Scope of authority, chains of command, decisional hierarchies, and escalation protocols all need to be defined. And for predictive models specifically, Guideline 2.4 requires documented processes covering design, development, verification, deployment, use, updating, and monitoring, including “methods used to detect and address errors, performance issues, outliers, or unfair discrimination.”

The Governance Gap Most Insurers Have

Most insurers have a model risk policy. Fewer have documented accountability chains that run from individual models up to board-level oversight. The bulletin requires both. During an examination, regulators will ask for evidence of the chain, not just the policy.
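One way to test for that gap is to record reporting lines as data and check that every model owner traces up to the board. The sketch below is a minimal Python illustration; the role names and the `REPORTS_TO` mapping are hypothetical examples, not structures the bulletin defines.

```python
from typing import Optional

# Hypothetical reporting lines: each role maps to the role it reports to.
REPORTS_TO = {
    "owner: uw-score-01": "AI governance committee",
    "owner: fraud-flag-02": "claims operations lead",  # lead has no upward line recorded
    "AI governance committee": "chief risk officer",
    "chief risk officer": "board",
}


def chain_to_board(start: str, reports_to: dict[str, str],
                   top: str = "board") -> Optional[list[str]]:
    """Follow reporting lines from `start`; return the chain, or None if it breaks or loops."""
    chain = [start]
    seen = {start}
    current = start
    while current != top:
        nxt = reports_to.get(current)
        if nxt is None or nxt in seen:
            return None  # undocumented link or circular reporting line
        chain.append(nxt)
        seen.add(nxt)
        current = nxt
    return chain


for owner in ("owner: uw-score-01", "owner: fraud-flag-02"):
    chain = chain_to_board(owner, REPORTS_TO)
    print(owner, "->", " -> ".join(chain) if chain else "BROKEN CHAIN")
```

A broken chain in this check is exactly the kind of finding worth fixing before an examiner asks for evidence of the chain.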

(If your organization is still working through manual governance processes, 5 Signs You Need AI Compliance Software outlines exactly when the manual approach stops working.)

3. Risk Management and Internal Controls (Section 3, Guidelines 3.1-3.7)

This section covers data and model integrity. Guideline 3.2 requires documented data practices including currency, lineage, quality, integrity, bias analysis and minimization, and suitability. Every word in that list is something a regulator can ask for evidence on.

(For a comprehensive overview of bias types that insurers need to account for, see our article on 7 common types of AI bias and how they affect different industries.)
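The bulletin does not name a bias metric. As one illustrative screen, the sketch below computes an adverse impact ratio: the favorable-outcome rate for one group divided by the rate for a reference group. The 0.8 threshold is the “four-fifths” rule of thumb borrowed from US employment-selection practice and is an assumption here, not a bulletin requirement; how group membership is obtained or inferred is a separate question this sketch does not address.

```python
def favorable_rate(outcomes: list[int]) -> float:
    """Share of favorable outcomes, where 1 = favorable (e.g. approved) and 0 = not."""
    if not outcomes:
        raise ValueError("group has no outcomes")
    return sum(outcomes) / len(outcomes)


def adverse_impact_ratio(group: list[int], reference: list[int]) -> float:
    """Favorable rate of `group` divided by the favorable rate of `reference`."""
    reference_rate = favorable_rate(reference)
    if reference_rate == 0:
        raise ValueError("reference group has no favorable outcomes")
    return favorable_rate(group) / reference_rate


FOUR_FIFTHS = 0.8  # assumption: screening threshold, not a bulletin requirement

# Hypothetical approval outcomes for two groups.
group_a = [1] * 60 + [0] * 40  # 60% approved
group_b = [1] * 80 + [0] * 20  # 80% approved

ratio = adverse_impact_ratio(group_a, group_b)
print(f"adverse impact ratio: {ratio:.2f}")  # adverse impact ratio: 0.75
if ratio < FOUR_FIFTHS:
    print("flag for bias review and document the analysis")
```

A ratio below the threshold is a prompt for investigation and documentation, not proof of unfair discrimination on its own.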

Guideline 3.3 goes further for predictive models: the insurer must maintain inventories of all models, detailed documentation of development and use, and assessments of interpretability, repeatability, robustness, reproducibility, traceability, and model drift. That is not a checklist. It is a documentation standard.

Guideline 3.4 addresses validation: testing must assess the generalization of outputs on implementation, comparing performance on unseen data to post-implementation results. Validation can take different forms, but it must be documented, and it must happen before deployment, not after.

See also: Fairness and Bias in Machine Learning: Mitigation Strategies for guidance on what independent validation should actually test for.
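The comparison Guideline 3.4 describes, performance on unseen data at development time versus performance after implementation, can be sketched in a few lines of Python. Accuracy is used only to keep the example short; a real validation would use metrics suited to the model (for example, discrimination or calibration measures), and the 0.05 tolerance is a hypothetical value the validation team would set and document.

```python
def accuracy(y_true: list[int], y_pred: list[int]) -> float:
    """Fraction of predictions that match the observed outcome."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("need equal-length, non-empty label lists")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def generalization_check(holdout: tuple[list[int], list[int]],
                         post_impl: tuple[list[int], list[int]],
                         tolerance: float = 0.05) -> dict:
    """Compare holdout performance with post-implementation performance."""
    holdout_acc = accuracy(*holdout)
    live_acc = accuracy(*post_impl)
    drop = holdout_acc - live_acc
    return {
        "holdout_accuracy": holdout_acc,
        "post_implementation_accuracy": live_acc,
        "drop": drop,
        "within_tolerance": drop <= tolerance,
    }


# Hypothetical labels: (observed outcomes, model predictions)
holdout = ([1, 0, 1, 1, 0, 1, 0, 0, 1, 1], [1, 0, 1, 1, 0, 1, 0, 1, 1, 1])
post_impl = ([1, 0, 1, 1, 0, 1, 0, 0, 1, 1], [0, 0, 1, 0, 0, 1, 1, 1, 1, 1])
result = generalization_check(holdout, post_impl)
print(result["within_tolerance"])  # False: 0.9 on holdout vs 0.6 after implementation
```

Storing the returned dictionary with the model’s inventory entry is one simple way to keep the documented evidence examiners ask for.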

4. Third-Party AI Systems and Data (Section 3, Guidelines 4.1-4.3)

Guideline 4.1 requires that the insurer conduct due diligence to ensure decisions made by third-party AI systems will meet the legal standards imposed on the insurer itself. The vendor’s compliance is your problem.

Guideline 4.2 specifies what contracts with AI vendors should include: audit rights or entitlement to receive audit reports from qualified auditing entities, and a requirement that the third party cooperate with regulatory inquiries related to the insurer’s use of their product. If those provisions are not in your current vendor agreements, you have a gap the bulletin is explicitly designed to expose.

Guideline 4.3 requires actually exercising those rights, not just having them. Documented audits, confirmation processes, and evidence of follow-through. A contract clause you have never used does not demonstrate compliance.
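Because Guideline 4.3 is about exercising rights, not just holding them, a vendor file check needs both the contract terms and the audit history. A minimal Python sketch, assuming hypothetical clause labels (`audit_rights`, `regulatory_cooperation`), hypothetical vendor names, and a hypothetical 365-day review window:

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional

# Hypothetical labels for the two provisions Guideline 4.2 describes.
REQUIRED_CLAUSES = {"audit_rights", "regulatory_cooperation"}


@dataclass
class VendorFile:
    vendor: str
    clauses: set[str] = field(default_factory=set)
    last_audit: Optional[date] = None  # when an audit or confirmation was actually performed


def vendor_gaps(record: VendorFile, as_of: date, max_age_days: int = 365) -> list[str]:
    """List contract and follow-through gaps for one vendor as of a given date."""
    gaps = [f"missing clause: {c}" for c in sorted(REQUIRED_CLAUSES - record.clauses)]
    if record.last_audit is None:
        gaps.append("audit rights never exercised")
    elif (as_of - record.last_audit).days > max_age_days:
        gaps.append("last audit outside review window")
    return gaps


today = date(2026, 1, 1)
print(vendor_gaps(VendorFile("VendorCo", {"audit_rights"}), today))
print(vendor_gaps(VendorFile("ScoreCo", set(REQUIRED_CLAUSES), date(2025, 6, 1)), today))
```

The first vendor shows both kinds of gap (a missing clause and an unused right); the second comes back clean.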

What Regulators Will Actually Ask For

Section 4 of the bulletin is the most practically important part. It describes what regulators expect to see during any investigation or market conduct action involving AI. This is not hypothetical: these are the actual document requests you can expect. For those building audit readiness from scratch, our post on how to audit your AI policy for compliance and security risks outlines a useful parallel process.

1. Your AIS Program Documentation

Regulators will ask for the written AIS Program itself, evidence of its adoption, its scope (including any AI systems not covered by it), and how it is tailored to the insurer’s specific risk profile. They will also ask for the policies, procedures, training materials, and governance records that demonstrate the program is actually implemented, not just written. (Reference: NAIC AI Model Bulletin, Section 4, Item 1.1)

2. Your Model Inventory and Validation Records

For any specific model under examination, Section 4 requires: documentation of compliance with all AIS Program policies in the model’s development and use; data source, provenance, lineage, quality, integrity, and bias analysis records; and the techniques, measurements, and thresholds used in development and validation. Model drift evaluation is specifically called out as a required component.

For a practical breakdown of what drift looks like in production and how to measure it, our article on AI drift types, causes and early detection is the right starting point.
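The bulletin requires drift to be measured but does not name a metric. One widely used option for the distribution shifts described in the Model Drift definition is the Population Stability Index (PSI), computed over matching bins of the training and live distributions. The 0.10 and 0.25 cut-offs below are common industry rules of thumb, used here as assumptions; the bulletin leaves thresholds to the insurer, which must document them.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index: sum over bins of (a - e) * ln(a / e).

    `expected` and `actual` are bin shares (each summing to 1) over the same bins.
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # floor empty bins to avoid log(0)
        total += (a - e) * math.log(a / e)
    return total


def drift_status(value: float, warn: float = 0.10, alert: float = 0.25) -> str:
    """Map a PSI value to an action; thresholds are assumptions, not bulletin values."""
    if value >= alert:
        return "alert: trigger re-validation"
    if value >= warn:
        return "warn: investigate"
    return "stable"


training_shares = [0.25, 0.25, 0.25, 0.25]  # hypothetical score distribution at training
live_shares = [0.10, 0.20, 0.30, 0.40]      # hypothetical distribution in production
value = psi(training_shares, live_shares)
print(f"PSI = {value:.3f} -> {drift_status(value)}")  # PSI = 0.228 -> warn: investigate
```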

3. Your Third-Party Vendor Files

If the examination involves a third-party AI system, regulators will separately request due diligence records on the vendor, the full vendor contract including representations, warranties, data security, IP rights, and cooperation clauses, and documentation of any audits or confirmation processes performed. This is a separate document request from your internal governance files. You need both. (Reference: NAIC AI Model Bulletin, Section 4, Items 2.1-2.4)

4. Reality Check

Section 4 closes with this: “Nothing in this bulletin limits the authority of the Department to conduct any regulatory investigation, examination, or enforcement action relative to any act or omission of any Insurer that the Department is authorized to perform.”

Translation: the bulletin describes a floor, not a ceiling. If regulators find something during an examination that is not covered here, they can still act on it.

6 Practical Steps: Build Backwards From the Examination

The most efficient way to approach compliance is to work backwards from Section 4. Build everything you would need to produce on short notice during a market conduct action, and maintain it continuously. Our step-by-step video guide on how to build an AI governance framework is a useful companion to the steps below.

Step 1: Inventory Every AI System in Scope

Start with a complete audit of every AI system used in regulated insurance decisions, proprietary and vendor-provided. Document the purpose, data inputs, decision scope, responsible owner, and risk tier for each. This is the foundation for everything else, and it is the first thing regulators ask for.
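An inventory is easier to keep complete when each entry is a structured record rather than a free-form spreadsheet row. A minimal Python sketch, assuming hypothetical field names that mirror the attributes listed above (purpose, data inputs, decision scope, responsible owner, risk tier); the system IDs, owner, and vendor name are illustrative:

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class InventoryRecord:
    """One entry in a hypothetical AI system inventory."""
    system_id: str
    purpose: str
    decision_scope: str  # e.g. "underwriting", "claims", "fraud detection"
    owner: str           # a named, accountable individual
    risk_tier: str       # e.g. "low", "medium", "high"
    data_inputs: list[str] = field(default_factory=list)
    vendor: Optional[str] = None  # None for proprietary systems

    def missing_fields(self) -> list[str]:
        """Return the required attributes that are still empty."""
        required = {
            "purpose": self.purpose,
            "decision_scope": self.decision_scope,
            "owner": self.owner,
            "risk_tier": self.risk_tier,
            "data_inputs": self.data_inputs,
        }
        return [name for name, value in required.items() if not value]


def incomplete_records(inventory: list[InventoryRecord]) -> dict[str, list[str]]:
    """Map system_id to missing attributes for every incomplete entry."""
    return {r.system_id: r.missing_fields() for r in inventory if r.missing_fields()}


inventory = [
    InventoryRecord("uw-score-01", "Auto underwriting score", "underwriting", "J. Doe",
                    "high", ["prior claims", "vehicle data"], vendor="VendorCo"),
    InventoryRecord("fraud-flag-02", "Claims fraud triage", "fraud detection", "", "low"),
]
print(incomplete_records(inventory))  # {'fraud-flag-02': ['owner', 'data_inputs']}
```

Keeping vendor-provided systems in the same structure, with the `vendor` field set, makes it easier to pull both the internal and the vendor files that Section 4 requests separately.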

Step 2: Write an AIS Program Proportionate to Your Actual Risk

A small insurer using one vendor scoring model needs a different program than a carrier running a dozen proprietary ML models. The bulletin says “proportionate,” and that word matters. Proportionate does not mean minimal. It means calibrated to your actual Degree of Potential Harm for each AI use case.

Step 3: Fix Your Vendor Contracts Before the Next Renewal

Review every AI vendor agreement for audit rights and regulatory cooperation clauses. If they are not there, negotiate them in at the next contract renewal, or sooner if your risk exposure warrants it. A contract without those provisions is a documented gap that an examiner will find.

Step 4: Separate Your Validation Team From Your Development Team

The bulletin’s validation requirements imply independence. Bias testing and model validation done by the people who built the model, or accepted on a vendor’s assurance, will not satisfy what Section 3 Guideline 3.4 is asking for. Build an independent validation function, or contract one. Document everything it produces. This is exactly the kind of challenge an AI governance platform is designed to solve.

Step 5: Build Consumer Notice Into Your AI Workflows

Guideline 1.9 requires consumer notification that AI systems are in use. Adverse decision processes need plain-language explanations of how AI contributed to the outcome. If your systems cannot produce that, it is a gap, and it directly implicates consumer-facing regulatory obligations, not just internal governance. For context on what “explainable” really means in regulated settings, see our article on why explainable AI in banking and finance is key for compliance.
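Producing plain-language explanations is easier if each model already outputs per-factor contributions (for example, reason codes or attribution scores). The sketch below assumes such contributions exist and that negative values pushed toward the adverse outcome; the factor names and notice wording are a hypothetical template, not language the bulletin prescribes.

```python
def adverse_decision_notice(decision: str,
                            contributions: dict[str, float],
                            plain_names: dict[str, str],
                            top_n: int = 3) -> str:
    """Build a plain-language notice from the factors that most pushed toward the outcome.

    Assumes negative contribution values pushed toward the adverse decision.
    """
    adverse = sorted((value, name) for name, value in contributions.items() if value < 0)
    if not adverse:
        raise ValueError("no adverse factors to explain; review before sending a notice")
    reasons = [plain_names.get(name, name) for _, name in adverse[:top_n]]
    return (
        f"Your application was {decision}. "
        "An automated model was used in making this decision. "
        f"The factors that most affected the outcome were: {'; '.join(reasons)}. "
        "Contact us to request more information about this decision."
    )


# Hypothetical model output and plain-language labels.
contributions = {"prior_claims": -0.40, "annual_mileage": -0.20,
                 "vehicle_age": 0.10, "territory": -0.05}
plain_names = {"prior_claims": "number of prior claims",
               "annual_mileage": "reported annual mileage"}
print(adverse_decision_notice("declined", contributions, plain_names, top_n=2))
```

Raising an error when no adverse factor is found is deliberate: a notice with no reasons is itself a gap that should go to human review.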

Step 6: Run Your Own Section 4 Audit Annually

Once a year, pull the Section 4 document list and ask: could we produce all of this within 30 days? Not “do we have a policy that covers this,” but could we produce the actual documentation? The gap between those two questions is usually where examination problems live.
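The annual self-audit can be as simple as estimating, for each Section 4 request, how many days it would take to produce the actual documents. A minimal Python sketch; the request names paraphrase the items summarized in the examination section above, and the day estimates are hypothetical:

```python
from typing import Optional

# Days to produce each Section 4 item; None means it cannot currently be produced.
days_to_produce: dict[str, Optional[int]] = {
    "written AIS Program and evidence of adoption": 2,
    "policies, procedures, training materials, governance records": 10,
    "data source, provenance, lineage, quality, and bias analysis records": 45,
    "development and validation techniques, measurements, thresholds": 20,
    "model drift evaluation": None,
    "vendor due diligence records": 15,
    "vendor contracts": 5,
    "vendor audit and confirmation records": None,
}


def self_audit(estimates: dict[str, Optional[int]], deadline_days: int = 30) -> list[str]:
    """Return the items that could not be produced within the deadline, worst first."""
    gaps = [(float("inf") if days is None else days, item)
            for item, days in estimates.items()
            if days is None or days > deadline_days]
    return [item for _, item in sorted(gaps, reverse=True)]


for item in self_audit(days_to_produce):
    print("GAP:", item)
```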

Pre-Deployment Compliance Checklist (Tied to Bulletin Requirements)

Before any AI system goes live in a regulated insurance decision process, these items should be confirmed. Each maps directly to a specific bulletin requirement.

- ☐ AI system added to model inventory with owner, purpose, risk tier, and data inputs documented (Section 3, Guideline 3.3a)

- ☐ Degree of Potential Harm assessed and controls calibrated to that assessment (Section 3, Guideline 1.4)

- ☐ Independent validation completed by a team other than the development team (Section 3, Guideline 3.4)

- ☐ Bias analysis and minimization documented for all training and validation data (Section 3, Guideline 3.2). Related: Fairness and Bias in Machine Learning: Mitigation Strategies

- ☐ Governance approval obtained from the board-accountable committee or equivalent (Section 3, Guideline 1.3)

- ☐ Third-party vendor contract confirmed to include audit rights and regulatory cooperation clause (Section 3, Guideline 4.2)

- ☐ Consumer notice process confirmed for the relevant stage of the insurance lifecycle (Section 3, Guideline 1.9)

- ☐ Adverse decision explanation template prepared in plain language (Section 4, Item 1.1e)

- ☐ Ongoing monitoring plan in place with defined model drift thresholds and re-validation triggers (Section 3, Guideline 3.3c)

- ☐ Data lineage, provenance, and currency records ready for production on request (Section 4, Item 1.3b)
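The checklist above can double as a deployment gate: nothing ships until every applicable item is confirmed. A minimal Python sketch, assuming hypothetical short keys for each item; the references are copied from the checklist, and treating the vendor-contract item as applicable only to third-party systems is an assumption:

```python
# Hypothetical keys for the checklist items, with the bulletin references listed above.
CHECKLIST = {
    "inventory_entry": "Section 3, Guideline 3.3a",
    "harm_assessed": "Section 3, Guideline 1.4",
    "independent_validation": "Section 3, Guideline 3.4",
    "bias_analysis": "Section 3, Guideline 3.2",
    "governance_approval": "Section 3, Guideline 1.3",
    "vendor_contract": "Section 3, Guideline 4.2",
    "consumer_notice": "Section 3, Guideline 1.9",
    "adverse_explanation_template": "Section 4, Item 1.1e",
    "drift_monitoring_plan": "Section 3, Guideline 3.3c",
    "lineage_records": "Section 4, Item 1.3b",
}


def deployment_blockers(confirmed: set[str], uses_vendor: bool) -> list[str]:
    """Return every applicable checklist item that has not been confirmed."""
    blockers = []
    for item, reference in CHECKLIST.items():
        if item == "vendor_contract" and not uses_vendor:
            continue  # assumption: only applies to third-party systems
        if item not in confirmed:
            blockers.append(f"{item} ({reference})")
    return blockers


confirmed = set(CHECKLIST) - {"vendor_contract"}
print(deployment_blockers(confirmed, uses_vendor=False))  # []
print(deployment_blockers(confirmed, uses_vendor=True))   # ['vendor_contract (Section 3, Guideline 4.2)']
```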

Lumenova AI’s enterprise-grade platform, with model version control, automates and documents every item on the checklist above, so your team is exam-ready at all times.

Conclusion

The NAIC AI Model Bulletin is not the end of this story. It is the opening chapter. More states are adopting it. More enforcement actions are coming. And when the first high-profile case breaks (an insurer found liable for discriminatory AI outcomes it could not explain), everyone in the industry will quietly audit their own governance programs and wish they had started earlier. This is not speculation. Our analysis of the hidden AI risks facing banks and insurance companies shows exactly how those failures tend to surface.

The good news is that you are reading this now. That means you still have time to build governance that actually works: not a policy document that lives in a shared drive, but real infrastructure, real accountability, and real monitoring.

Responsible AI is not the thing that slows you down. It is the thing that keeps you in the game. For a closer look at how leading organizations are operationalizing this, see our articles on the features you need in an AI governance platform and on implementing responsible AI governance in modern enterprises.

Frequently Asked Questions

Does the bulletin apply to small insurers?

Yes, but proportionately. The bulletin is addressed to all licensed insurers in adopting states, regardless of size. However, Guideline 1.4 explicitly requires that controls be “tailored to and proportionate with the Insurer’s use and reliance on AI.” A small insurer using a single vendor credit score is not expected to maintain the same program infrastructure as a large national carrier. The obligation is real; the scale is calibrated.

Is a predictive model the same as an AI system?

They are related but not identical. A predictive model is a specific type of AI system, one that uses historical data and algorithms to predict outcomes. All predictive models are AI systems under the bulletin’s definitions, but not all AI systems are predictive models. Generative AI, for example, is separately defined and subject to the same AIS Program requirements, but the validation and drift considerations differ. The bulletin calls this out specifically in Section 4: “The nature of validation, testing, and auditing should be reflective of the underlying components of the AI System, whether based on Predictive Models or Generative AI.”

Can we use the NIST AI RMF as our AIS Program?

It can be the foundation, but not a straight substitution. Guideline 1.5 allows the AIS Program to “adopt, incorporate, or rely upon, in whole or in part, a framework or standards developed by an official third-party standard organization, such as the NIST Artificial Intelligence Risk Management Framework, Version 1.0.” The key phrase is “in whole or in part.” The bulletin-specific requirements around predictive model documentation, consumer notice, and third-party vendor obligations still apply regardless of which external framework you build on.

Should we act now if our states have not adopted the bulletin?

Practically speaking, yes. Four states (California, Colorado, New York, and Texas) have their own separate AI frameworks already in effect. If you operate across state lines, those apply now. Beyond that, the adoption pace since December 2023 suggests most remaining states will act. Building a compliant AIS Program now costs far less than retrofitting one under examination pressure.

Do our AI vendor contracts have to include audit rights?

The bulletin does not mandate specific contract terms. Guideline 4.2 says “where appropriate and available.” But it also requires that the insurer conduct due diligence to ensure third-party AI systems meet the same legal standards the insurer itself is subject to. If a vendor will not cooperate with audits or regulatory inquiries, that is a due diligence finding, and deploying that system without mitigation creates documented exposure. Regulators can and will ask how you assessed a vendor’s compliance when that vendor’s system produced an adverse consumer outcome.

How does the Lumenova AI Platform support compliance with the bulletin?

The Lumenova AI Platform is built to operationalize the specific requirements in the bulletin’s Section 3 and Section 4: automated model inventory management mapped to the bulletin’s documentation standards, independent bias testing workflows, model drift monitoring with configurable thresholds, third-party vendor assessment tools, and audit-ready reporting aligned to examination request formats. It is designed so that when Section 4 document requests arrive, the production process takes hours, not weeks.